Leaders in Artificial Intelligence/Machine Learning and Computer Vision

Kitware at GEOINT

As Silver sponsors of GEOINT 2021, we are especially pleased to be a part of this year’s program. In addition to exhibiting (you can find us at Booth #815), Kitware will be presenting a training session and giving two lightning talks. Visit the Events Schedule section for more information.

Watch this video to learn more about our computer vision capabilities or read about them here.

Would you like to hear more about our capabilities?

Schedule a meeting with us to discuss how you can leverage our computer vision capabilities for your project.

Events Schedule

Training Session: How to Find Objects with Limited Labels

Thursday, October 7 from 7:30 – 8:30 AM

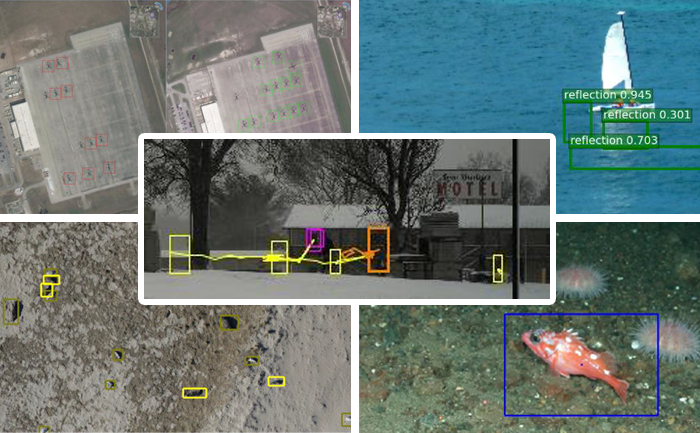

This session will address a persistent problem: while artificial intelligence and machine learning (AI/ML) continue to revolutionize analysis and decision making for warfighters, the need for massive amounts of labeled data hinders the usefulness of this technology. The expense of labeling and the difficulty of finding instances of rare objects have become a barrier for many. In this tutorial, we will describe both unsupervised and supervised methods that attack the problem from multiple perspectives. We will discuss open source software that will enable analysts to create accurate detectors for rare objects with only a handful of examples, including VIAME, developed by Kitware with NOAA and DoD funding. The training session will be presented by Anthony Hoogs, Ph.D., vice president of AI at Kitware.

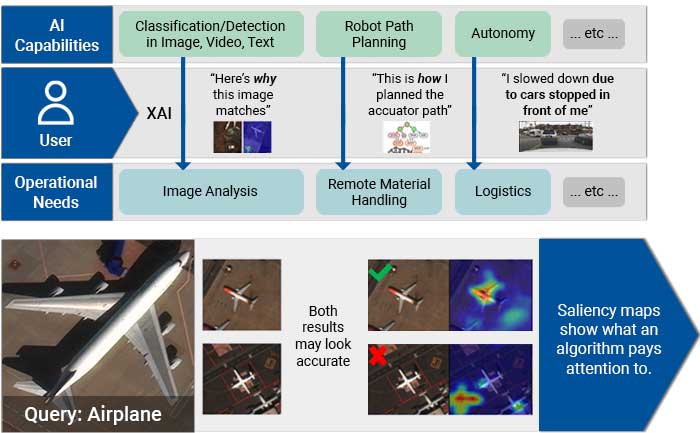

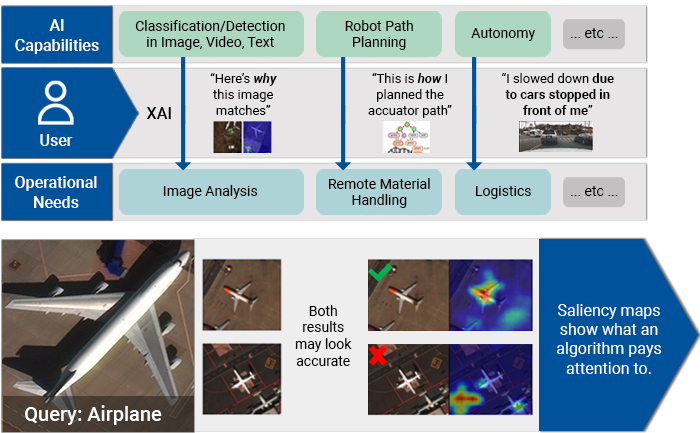

Lightning Talk: Explainable Artificial Intelligence (XAI)

This talk will address the issues that result from a lack of human understanding as to how machine learning-based systems make their decisions. XAI attempts to help end users understand and appropriately trust ML systems. This lightning talk will include an overview of the field of XAI, highlighting common problems and techniques. It will cover Kitware’s work on explainable, interactive content-based image retrieval in the satellite image domain. We will also discuss our ongoing work in creating an open-source XAI toolkit, which collects data, software, and papers from the four-year DARPA XAI program into a common, searchable framework. This lightning talk will be presented by Anthony Hoogs, Ph.D., vice president of AI at Kitware.

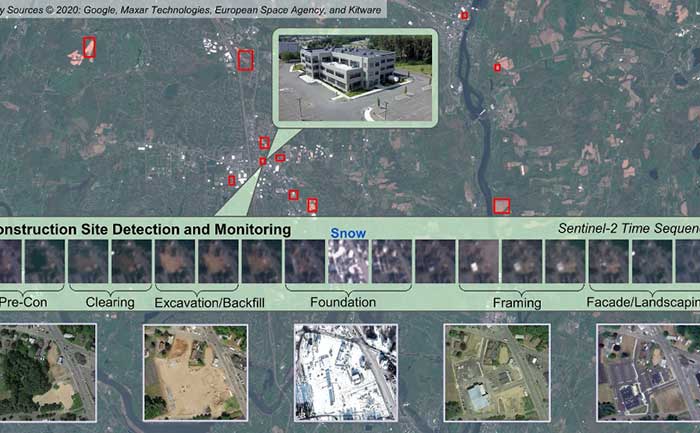

Lightning Talk: Preliminary Kitware Results on the IARPA SMART Program (Space-based Machine Automated Recognition Technique)

This lightning talk will summarize Kitware’s progress in building a system to search through enormous catalogs of satellite images from various sources to find and characterize relevant change events as they evolve over time. Our team is addressing the problems of harmonizing data over time from multiple satellites into a consistent data cube. We are exploiting this data cube to find man-made change – initially construction sites. Our system, called WATCH (Wide Area Terrestrial Change Hypercube), will further categorize detected sites into stages of construction with defined geospatial and temporal bounds and will predict end dates for activities that are currently in progress. This lightning talk will be presented by Matt Leotta, Ph.D., a technical leader on Kitware’s Computer Vision Team.

Kitware’s Computer Vision Focus Areas

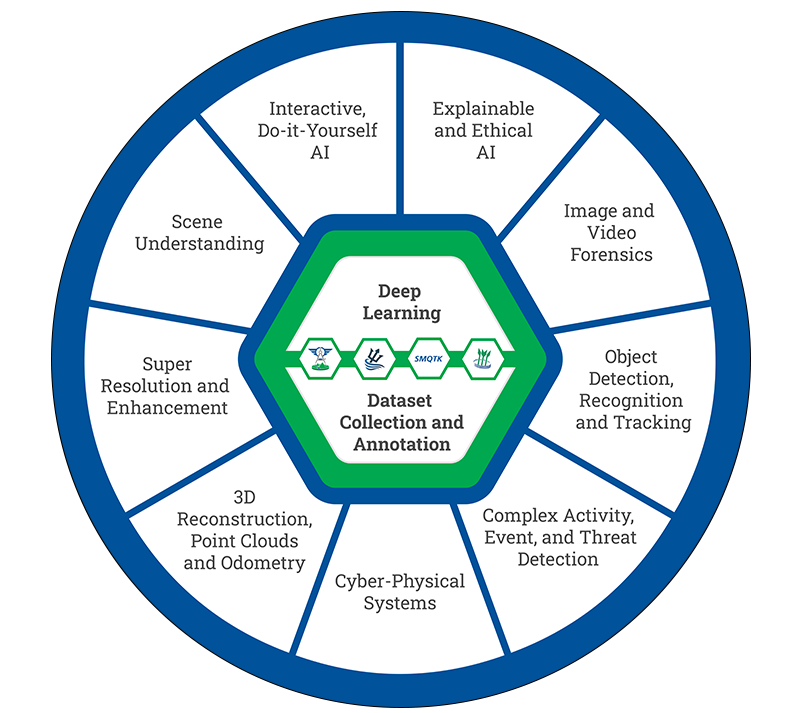

Machine Learning

Through our extensive experience in AI and our early adoption of machine learning, we have made dramatic improvements in object detection, recognition, tracking, activity detection, semantic segmentation, and content-based retrieval. Our expertise focuses on hard visual problems, such as low resolution, very small training sets, rare objects, long-tailed class distributions, large data volumes, real-time processing, and onboard processing.

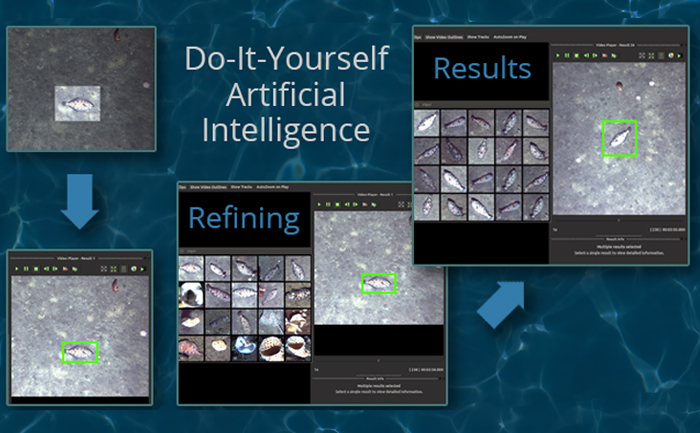

Interactive Do-It-Yourself AI

Using Kitware’s interactive DIY AI toolkits, users can rapidly build, test, and deploy novel AI solutions without having expertise in ML or computer programming. You can easily train object classifiers using interactive query refinement, without drawing any bounding boxes. Our toolkits also allow you to perform customized, highly specific searches of large image and video archives. Our DIY AI toolkits have both scientific and defense applications and are provided with unlimited rights to the government.

Explainable and Ethical AI

Kitware has developed powerful tools to explore, quantify, and monitor the behavior of deep learning systems. Our team is making deep neural networks explainable and robust when faced with previously unknown conditions. We are stepping outside of classic AI systems to address domain-independent novelty identification, characterization, and adaptation so that systems can recognize and respond to the introduction of unknowns. We also value the need to understand the ethical concerns, impacts, and risks of using AI. Therefore, Kitware is developing methods to understand, formulate, and test ethical reasoning algorithms for semi-autonomous applications.

Object Detection, Recognition and Tracking

Our video object detection and tracking tools are the culmination of years of continuous government investment. Our suite of tools can identify and track moving objects in many types of intelligence, surveillance, and reconnaissance (ISR) data, including video from ground cameras, aerial platforms, underwater vehicles, robots, and satellites. Through specialized techniques, these trackers perform in challenging settings and address difficult factors such as low contrast and resolution, moving cameras, and high traffic density.

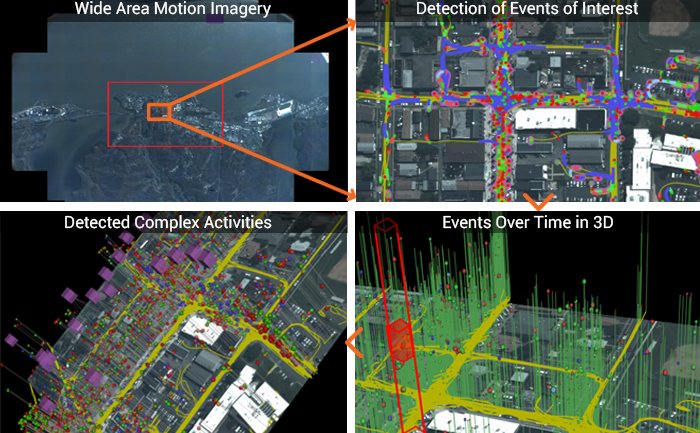

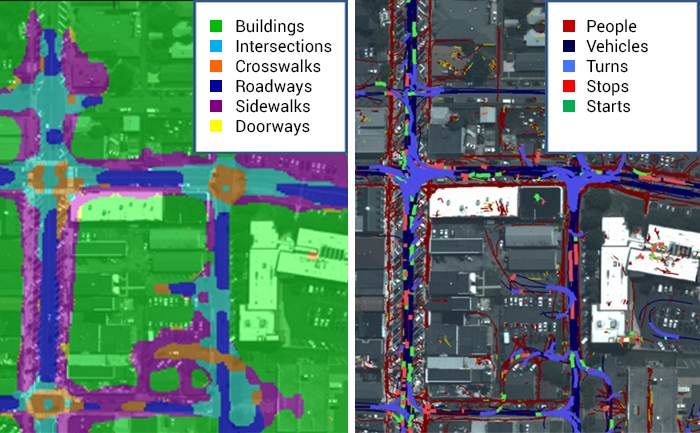

Complex Activity, Event, and Threat Detection

Kitware’s tools recognize high-value events, salient behaviors and anomalies, complex activities, and threats through the interaction and fusion of low-level actions and events, even in dense, cluttered environments. Operating on tracks from WAMI, FMV, MTI, or other sources, these algorithms characterize, model, and detect actions. Many of our tools feature alerts for specific behaviors, activities, and events. This allows you to detect threats in massive video streams and archives, despite missing data, detection errors, and deception.

Cyber-Physical Systems (CPS)

Kitware has created state-of-the-art cyber-physical systems that perform onboard, autonomous processing to gather and extract critical data. Using computer vision and deep learning technology, our sensing and analytics systems can overcome the challenges of an ever-changing physical environment. They are customized to solve real-world problems in aerial, ground, and underwater scenarios. These capabilities have been field-tested and proven successful in programs funded by DARPA, AFRL, NOAA, and more.

Dataset Collection and Annotation

The growth in deep learning has increased the demand for quality, labeled datasets needed to train models and algorithms. Kitware has developed and cultivated dataset collection, annotation, and curation processes to build powerful capabilities that are unbiased and accurate. Kitware can collect and source datasets and design custom annotation pipelines, and we are able to annotate image, video, text, and other data types. Kitware also performs quality assurance that is driven by rigorous metrics to highlight when returns are diminishing.

Image and Video Forensics

We are continuously developing algorithms to automatically detect image and video manipulation that can operate on large data archives. These advanced deep learning algorithms give us the ability to: detect inserted, removed, or altered objects; distinguish deep fakes from real images; and identify deleted or inserted frames in videos in a way that exceeds human performance. We continue to extend this work through multiple government programs to detect manipulations in falsified media exploiting text, audio, images, and video.

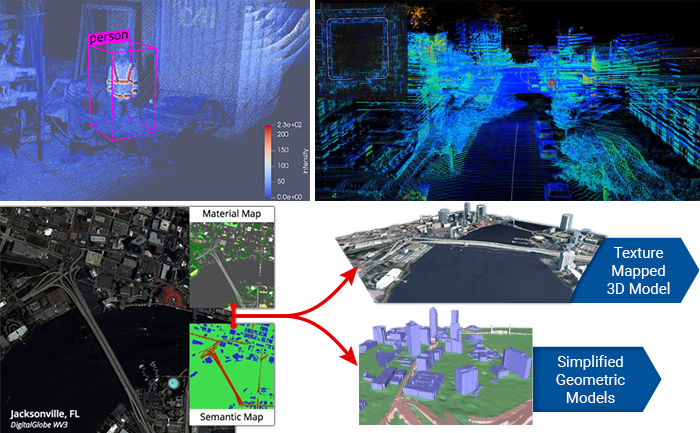

3D Reconstruction, Point Clouds, and Odometry

Kitware’s algorithms can extract 3D point clouds and surface meshes from video and images with or without metadata or calibration information. Our methods estimate scene semantics and 3D reconstruction jointly to maximize the accuracy of object classification, visual odometry, and 3D shape.

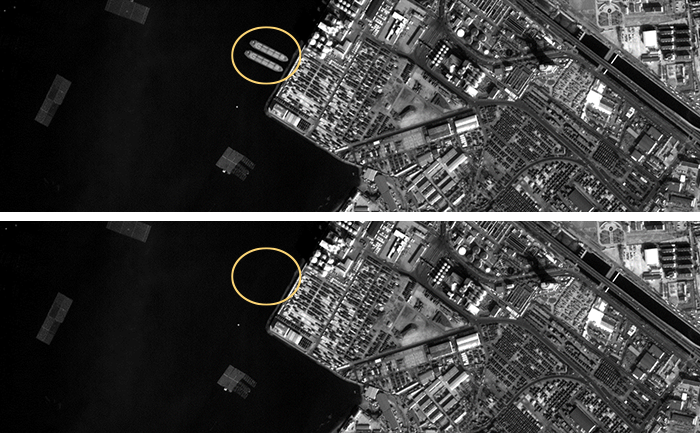

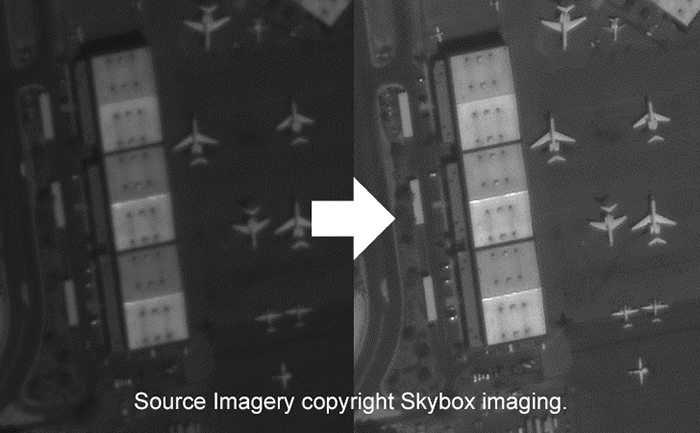

Super Resolution and Enhancement

Kitware’s super-resolution techniques enhance single or multiple images to produce improved images. We use novel methods to compensate for widely spaced views and illumination changes in overhead imagery, particulates and light attenuation in underwater imagery, and other challenges in a variety of domains. The resulting higher-quality images enhance detail, enable advanced exploitation, and improve downstream automated analytics, such as object detection and classification.

Scene Understanding

Kitware’s knowledge-driven scene understanding capabilities use deep learning techniques to accurately segment scenes into object types. In video, our unique approach defines objects by behavior, rather than appearance, so we can identify areas with similar behaviors. Through observing mover activity, our capabilities can segment a scene into functional object categories that may not be distinguishable by appearance alone.

Kitware’s Artificial Intelligence/Machine Learning Open Source Platforms

VIAME is an open source, do-it-yourself AI system for analyzing imagery and video for general use, with specialized tools for the marine environment.

TeleSculptor is a cross-platform application for aerial photogrammetry.

SMQTK is an open source toolkit for exploring large archives of image and video data that enables users to easily and dynamically train custom object classifiers for retrieval.

KWIVER is an open source toolkit that solves challenging image and video analysis problems.