AI in ParaView and LidarView: Point cloud Deep Learning frameworks integration with Python plugins

Since ParaView 5.6, plugins can be written in pure Python. Python plugins combine ParaView's point cloud processing abilities with the large open source Python code base, which makes it possible to run deep learning models based on PyTorch or TensorFlow on custom point clouds.

LidarView v4.1.5+ and VeloView v5+ are built upon ParaView 5.6. They can be downloaded here:

Let’s see how this works with a 3D object detection example.

The example below is based on OpenMMLab’s MMDetection3D and uses the Python plugin code available in this repo. It has been tested on Ubuntu 18.04.

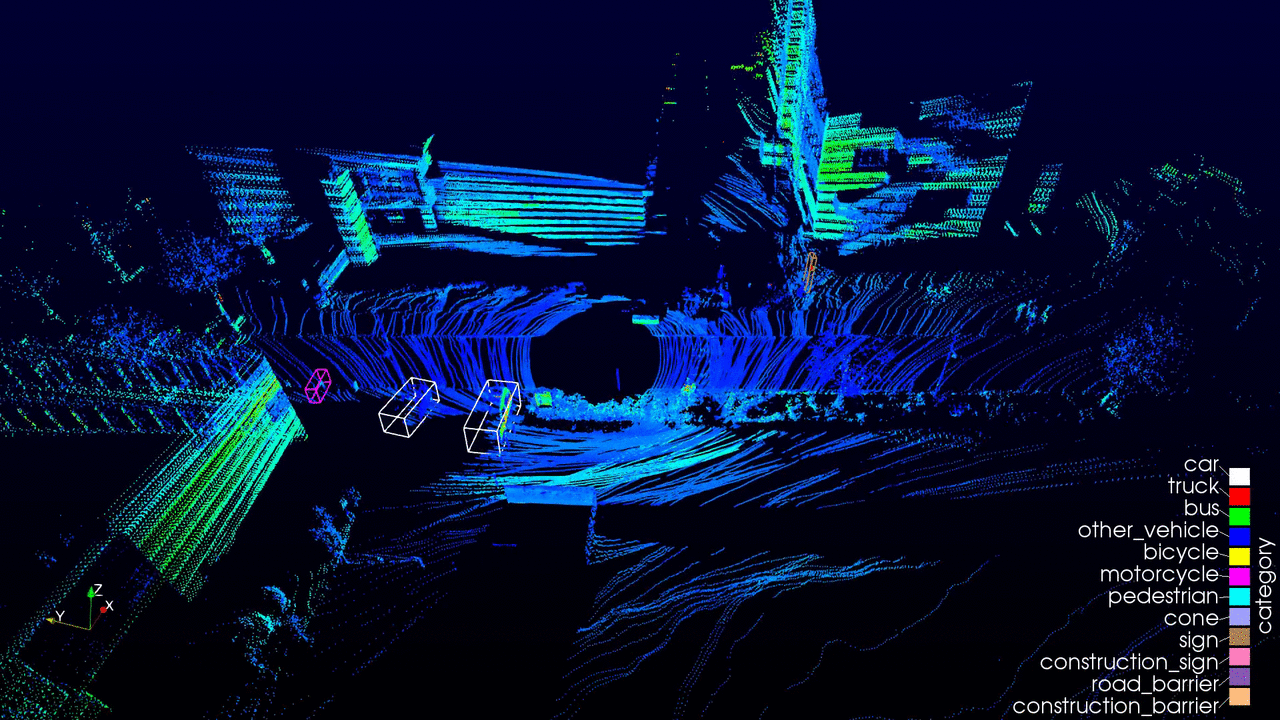

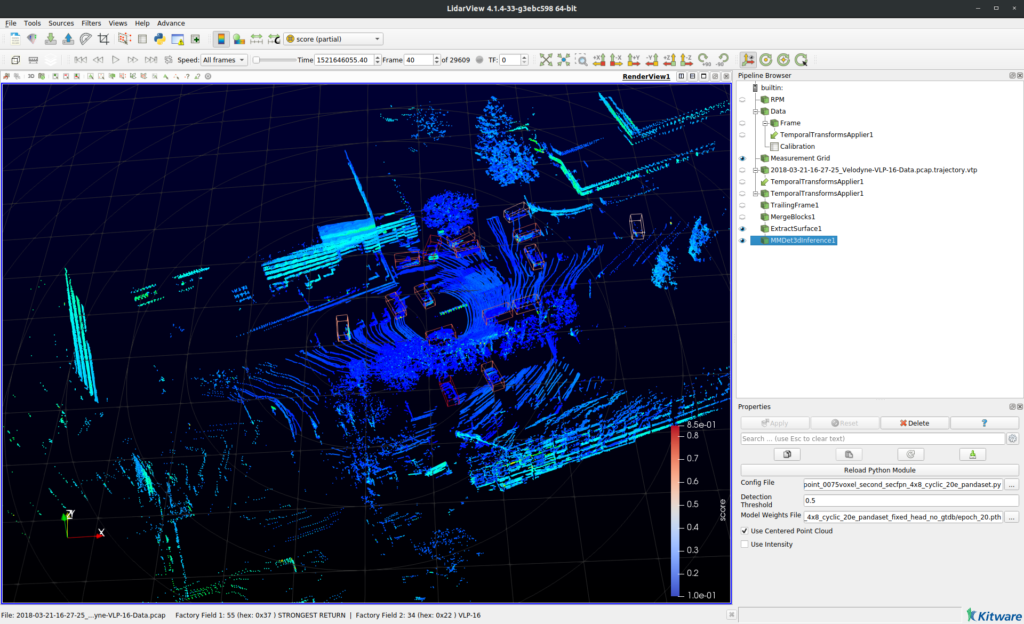

What it looks like

What it does

This plugin adds a filter that:

- instantiates an MMDetection3d model,

- converts its ParaView PolyData input to a format that the model can read, using the VTK-NumPy integration,

- passes the transformed point cloud to the MMDetection3D model,

- reads the model’s output,

- displays the detected bounding boxes.
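
To make these steps more concrete, here is a minimal sketch of the data handling around the model call. It is not the code of the plugin itself: the helper names (`polydata_to_arrays`, `build_model_input`, `box_corners`), the `"intensity"` array name and the box layout (geometric center, size, yaw around the z axis) are assumptions made for illustration. Only the VTK-NumPy adapter `vtk.numpy_interface.dataset_adapter` is an existing VTK module.

```python
import numpy as np


def polydata_to_arrays(polydata, intensity_name="intensity"):
    """Extract (N, 3) coordinates and an optional per-point array from a vtkPolyData.

    The array name "intensity" is an assumption; it depends on the reader used.
    """
    # Imported lazily so the NumPy helpers below also work outside ParaView.
    from vtk.numpy_interface import dataset_adapter as dsa

    wrapped = dsa.WrapDataObject(polydata)
    xyz = np.asarray(wrapped.Points, dtype=np.float32)
    intensity = None
    if polydata.GetPointData().HasArray(intensity_name):
        intensity = np.asarray(wrapped.PointData[intensity_name], dtype=np.float32)
    return xyz, intensity


def build_model_input(xyz, intensity=None):
    """Stack coordinates (and intensity, if given) into one float32 (N, C) array."""
    xyz = np.asarray(xyz, dtype=np.float32).reshape(-1, 3)
    if intensity is None:
        return xyz
    intensity = np.asarray(intensity, dtype=np.float32).reshape(-1, 1)
    return np.hstack([xyz, intensity])


def box_corners(center, size, yaw):
    """Return the 8 corners of a box rotated by `yaw` (radians) around the z axis.

    Assumes `center` is the geometric center of the box.
    """
    # All sign combinations (-1/+1) along x, y and z give the 8 corners.
    signs = np.array(
        [[sx, sy, sz] for sx in (-1, 1) for sy in (-1, 1) for sz in (-1, 1)],
        dtype=np.float64,
    )
    local = 0.5 * signs * np.asarray(size, dtype=np.float64)
    c, s = np.cos(yaw), np.sin(yaw)
    rot_z = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    return local @ rot_z.T + np.asarray(center, dtype=np.float64)
```

In such a sketch, the array returned by `build_model_input` is what would be handed to the detection model, and `box_corners` turns each detected box into points that can be drawn as a wireframe.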

How to get a trained model (please make sure that its licence is compatible with your use):

- The model used in the video is a CenterPoint architecture trained on the PandaSet dataset,

- Other trained models can be found in MMDetection3d’s Model Zoo,

- You can also train your own model using MMDetection3d.

See mmdetection3d_inference.py if you want to have a closer look at the implementation.

More guidance on implementing Python filters can be found here.

How to run it in LidarView

To get this plugin in LidarView (with a version based on ParaView 5.6):

- install MMDetection3d on the Python 3.7 interpreter used by LidarView by following the instructions here,

- download the python plugin here,

- launch LidarView,

- if it is not active yet, activate the advanced mode by ticking Advance Features in the Help menu, so that the plugin can be loaded,

- load the mmdetection3d_inference Python plugin using the Advance > Tools (paraview) > Manage Plugins… menu,

- if you want the plugin to be automatically loaded when opening LidarView, tick the Autoload box.
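
If loading the plugin fails, a frequent cause is that MMDetection3D was installed for a different Python interpreter than the one embedded in LidarView. The sketch below is one possible way to check this from LidarView's Python shell. The helper names are hypothetical, and the list of required modules (`torch`, `mmdet3d`) is an assumption that should be adapted to your setup.

```python
import importlib.util
import sys


def module_available(name):
    """Return True if the top-level module `name` can be found by this interpreter."""
    return importlib.util.find_spec(name) is not None


def environment_report(required=("torch", "mmdet3d")):
    """Summarize the interpreter version and which required modules are visible."""
    return {
        "python": "{}.{}".format(*sys.version_info[:2]),
        "executable": sys.executable,
        "modules": {name: module_available(name) for name in required},
    }


if __name__ == "__main__":
    # The reported version should match the Python used to install MMDetection3D.
    print(environment_report())
```

A module reported as `False` means the package is not visible from this interpreter, which usually points to an installation done with another Python.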

In order to use it on your data:

- load your point cloud,

- add the filter to your pipeline by typing Ctrl + space and selecting MMDet3DInference,

- in the properties panel, select the model files and parameters:

- Config file: the .py MMDetection3D configuration file (see MMDetection3d documentation),

- Detection Threshold: Threshold on the detection score (used to remove noise from low-confidence detections),

- Model weights File: the .pth file with the weights of a trained model,

- Use Centered Point Cloud: tick the box to recenter the point cloud around its median point. This can improve performance when the sensor is moving or its position is unknown (as models are often trained on zero-centered point clouds). This does not affect the point positions in LidarView,

- Use Intensity: tick the box to pass the intensity to the model. This is model-dependent: tick it only if the model was fed intensity values during training, otherwise an error is raised.
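
The effect of the Detection Threshold and Use Centered Point Cloud options can be illustrated with a few lines of NumPy. This is a sketch under assumptions, not the plugin's implementation: the function names are hypothetical, the comparison is assumed to be "greater than or equal", and boxes are assumed to store their center in the first three columns.

```python
import numpy as np


def center_on_median(xyz):
    """Return a shifted copy of `xyz` whose per-axis median is at the origin, and the offset."""
    xyz = np.asarray(xyz, dtype=np.float32)
    offset = np.median(xyz, axis=0)
    return xyz - offset, offset


def keep_confident(boxes, scores, threshold):
    """Keep only the detections whose score reaches `threshold`."""
    scores = np.asarray(scores)
    mask = scores >= threshold
    return np.asarray(boxes)[mask], scores[mask]


def restore_box_centers(boxes, offset):
    """Shift box centers (assumed to be columns 0 to 2) back to the original frame."""
    boxes = np.array(boxes, dtype=np.float32, copy=True)
    boxes[:, :3] += offset
    return boxes
```

Since only a copy of the points is recentered before inference, the boxes predicted in the centered frame have to be shifted back by the same offset so that they line up with the untouched point cloud in the view.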

Update Q1 2025: A new blog post on this topic has been written and contains more up-to-date information and a tutorial. You may find it here: https://www.kitware.com/3d-point-cloud-object-detection-in-lidarview/.

Since then, VeloView has become obsolete: the last version is 5.1 (based on ParaView 5.9, built in October 2022). Please use LidarView instead.