Enhancing Software Quality with CI in the Cloud

Good software relies on quality practices such as Continuous Integration (CI) to maintain high standards of reliability, efficiency, security, maintainability, and size. These practices lower costs and reduce risk by identifying problems earlier, giving organizations the agility and confidence to try out new architectural directions, features, and dependency changes.

At Kitware, we have a long history of using version control and continuous testing systems to attain measurably high software quality. Our development practices have evolved by integrating popular quality tools and systems, allowing developers and managers to track metrics for large, complex projects being developed across geographically distributed teams.

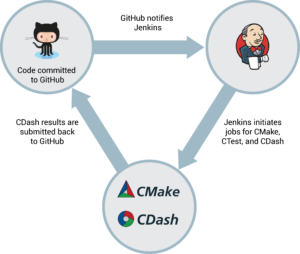

This article discusses how we have deployed Jenkins, a popular open-source CI tool, with the CMake Tool Suite on multiple cloud computing platforms to create a completely open-source testing system that alerts developers to potential problems as the code is developed. The projects discussed in this article are large C++ projects, although many of the tools and techniques can be used for other languages.

Automated Testing with CI

Kitware has enhanced several customer projects, including Google’s Tango, the NLM’s ITK, and Toyota Research Institute (TRI) / MIT’s Project Drake, by using Jenkins as our CI and automation task runner. Each time a commit is pushed to the version control repository, Jenkins runs a full build and a suite of unit and integration tests on the head of the branch, as well as on the branch after a simulated merge into the master branch.
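The simulated merge can be approximated with plain git commands in a CI workspace. The sketch below only builds the command list rather than running it. The remote name `origin`, the default target `master`, the example branch name, and the function name are assumptions for illustration, not the Jenkins configuration used in the projects above.

```python
from typing import List


def simulated_merge_plan(branch: str, target: str = "master",
                         remote: str = "origin") -> List[List[str]]:
    """Return git commands that test-merge `branch` into `target`.

    Nothing is pushed: the merge happens on a detached HEAD in the
    CI workspace, so the shared repository is never modified.
    """
    return [
        # Refresh both branches from the (assumed) remote.
        ["git", "fetch", remote, target, branch],
        # Detach at the tip of the target branch so no local branch moves.
        ["git", "checkout", "--detach", f"{remote}/{target}"],
        # Merge the candidate branch without creating a commit.
        ["git", "merge", "--no-commit", "--no-ff", f"{remote}/{branch}"],
    ]


if __name__ == "__main__":
    # "feature/new-solver" is a hypothetical branch name.
    for command in simulated_merge_plan("feature/new-solver"):
        print(" ".join(command))
```

Running the build and test suite on the resulting working tree exercises the code as it would exist after the merge, while the real master branch stays untouched.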

We’ve also added specific Jenkins jobs that perform algorithm evaluation. Any branch that touches algorithmic code can have these jobs run in addition to the test suites, resulting in quantitative measurements displayed on a quality metrics dashboard. Thus, the decision of whether to merge a code branch is informed not only by the stability of the codebase, but also by its fielded performance quality against relevant data sets.

Once a branch has been merged into master, a full test cycle is once again run to catch subtle integration problems related to merge-to-master ordering. Finally, nightly test suites and algorithm evaluation test jobs are run against the master branch, resulting in a standard time series of data that can be tracked and analyzed.

Measuring Quality with Dashboards

The problem with using Jenkins or Travis CI on large, complex projects is the length of the build logs. The standard output is unstructured, which makes it cumbersome to parse and difficult to compare over time, and it offers no clear way to analyze the health of the codebase.
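To make the problem concrete, here is a minimal sketch of turning raw build output into structured records. It assumes GCC/Clang-style diagnostics of the form `path:line:col: severity: message`. The sample log and function names are invented for illustration, and this is not how CDash itself processes logs.

```python
import re
from collections import Counter
from typing import Dict, List

# GCC/Clang-style diagnostic: path:line:col: severity: message
# (paths containing a colon, such as Windows drive letters, are not handled)
DIAGNOSTIC = re.compile(
    r"^(?P<file>[^:\s]+):(?P<line>\d+):(?P<col>\d+): "
    r"(?P<severity>warning|error): (?P<message>.*)$"
)


def parse_diagnostics(log_text: str) -> List[Dict[str, str]]:
    """Extract structured warning/error records from raw build output."""
    records = []
    for raw_line in log_text.splitlines():
        match = DIAGNOSTIC.match(raw_line.strip())
        if match:
            records.append(match.groupdict())
    return records


def count_by_severity(records: List[Dict[str, str]]) -> Counter:
    """Summarize records into counts that could be tracked per build."""
    return Counter(record["severity"] for record in records)


# Invented sample log, not taken from a real project.
SAMPLE_LOG = """\
[ 50%] Building CXX object src/CMakeFiles/solver.dir/solver.cpp.o
src/solver.cpp:42:9: warning: unused variable 'tmp' [-Wunused-variable]
src/solver.cpp:57:3: error: 'foo' was not declared in this scope
[100%] Linking CXX executable solver
"""

if __name__ == "__main__":
    print(count_by_severity(parse_diagnostics(SAMPLE_LOG)))
```

Once diagnostics are records rather than lines of text, counts per file or per severity can be compared from one build to the next.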

At Kitware, we have tackled this problem using our expertise in software build quality, data analysis, and visualization to extract metrics from the logs in CMake build environments running on CI infrastructure. These cloud-based, cross-platform builds use CDash to turn Jenkins output into easy-to-read dashboards that monitor codebase quality and alert developers to declining project health.

The CMake Tool Suite

CMake is a cross-platform build tool that gives C++ projects build portability similar to the write-once-run-anywhere promise of Java, Python, and C#, and allows developers to use the same build tool and files for all platforms.

CTest is a language-agnostic testing harness, distributed with CMake, that works with most common testing frameworks. Tests are created as part of the project build and are executed as part of the build cycle.

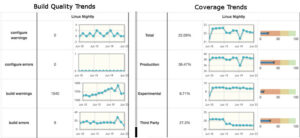

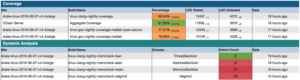

CDash is a web dashboard that aggregates, analyzes, and displays summary and detailed views of build and test results on a daily and historical basis, keeping builds organized by submitter, platform, architecture, compiler, and compiler settings.

The CMake suite of tools supports large and complex software projects that can be deployed in most major cloud-based environments. We have created a build process in Amazon Web Services (AWS) with a C++ and MATLAB codebase for TRI/MIT; for Google we have built this tooling in the Google Cloud, naturally; and we have deployed these tools in the Azure cloud for ITK, an open-source C++ project to which Kitware is a main contributor.

After each CMake build, CTest results are communicated to CDash, allowing developers to continually monitor and improve the state of the software system. CDash can identify individual tests that fail, link failing tests against commits to identify the possible source of failures, and present the history of builds. CDash can also update the branch integration record as soon as a test failure is detected by writing a comment in the GitHub pull request, without having to wait for the entire test suite to finish.
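The idea of linking a failing test to a likely source commit can be illustrated with a small sketch. It assumes a build history ordered oldest to newest, where each entry records a commit id and the tests that failed. The data layout, commit ids, and function name are hypothetical and do not reflect CDash's internal model.

```python
from typing import Dict, List, Set, Tuple

# Each entry is (commit id, names of tests that failed in that build),
# ordered oldest to newest. This layout is an assumption for illustration.
BuildHistory = List[Tuple[str, Set[str]]]


def first_failing_commit(history: BuildHistory) -> Dict[str, str]:
    """Map each test failing in the newest build to the commit where its
    current run of consecutive failures began."""
    if not history:
        return {}
    suspects: Dict[str, str] = {}
    _, latest_failures = history[-1]
    for test in latest_failures:
        # Walk backwards until we reach a build where the test passed.
        for commit, failures in reversed(history):
            if test not in failures:
                break
            suspects[test] = commit
    return suspects


# Invented history: test_io broke at b27c, test_mesh at d4e8.
HISTORY: BuildHistory = [
    ("a1f3", set()),
    ("b27c", {"test_io"}),
    ("c9d0", {"test_io"}),
    ("d4e8", {"test_io", "test_mesh"}),
]

if __name__ == "__main__":
    print(first_failing_commit(HISTORY))
```

The commit returned for each test is only a starting point for investigation, since a failure may also stem from an environment change rather than the code itself.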

In addition to building the software and running all the tests, the CMake suite can integrate with third-party static and dynamic analysis tools for code coverage, memory error and memory leak detection, thread sanitization, style consistency, and dependency analysis. Although these tools are extremely helpful, they are often difficult to run, and their output is even harder to interpret. CTest allows a team to easily include these tools in their standard test suites, while CDash clearly displays the results, revealing memory errors like buffer overflows and further enhancing code stability and security.

Tracking these extracted metrics has provided our customers with greater insight into project health. The metrics not only show the source of existing and past problems, they also document the frequency of those problems, identifying trouble spots in the codebase that predict looming issues. This has led to improved accuracy and confidence in estimations, improved communication within technical teams, reproducibly higher quality software, overall reduced risk for software projects, and, perhaps most importantly, reduced stress and anxiety for the whole team.
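As a rough illustration of how failure frequency can point to trouble spots, the sketch below ranks tests by the fraction of builds in which they failed. The input format, test names, and nightly data are invented for the example and are not drawn from any project mentioned here.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def failure_frequency(builds: Iterable[Iterable[str]]) -> List[Tuple[str, float]]:
    """Return (test name, fraction of builds in which it failed),
    sorted from most to least frequently failing."""
    build_sets = [set(failed) for failed in builds]
    if not build_sets:
        return []
    counts = Counter(test for failed in build_sets for test in failed)
    total = len(build_sets)
    ranked = [(test, count / total) for test, count in counts.items()]
    # Sort by descending rate, then by name so the order is deterministic.
    return sorted(ranked, key=lambda item: (-item[1], item[0]))


# Invented data: failing test names from five consecutive nightly builds.
NIGHTLY_FAILURES = [
    {"test_io"},
    set(),
    {"test_io", "test_mesh"},
    {"test_io"},
    set(),
]

if __name__ == "__main__":
    for name, rate in failure_frequency(NIGHTLY_FAILURES):
        print(f"{name}: failed in {rate:.0%} of builds")
```

A test that fails often but not always is a candidate for closer inspection, whether the cause is fragile code or a fragile test.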

The ability to extract these metrics for build quality is an important first step in creating a baseline for project health. Watch for the next post in this series, where we will discuss augmenting this baseline with algorithm evaluation and performance metrics.

Want to learn more about how Kitware improves software projects? Let us know in the comments below or contact us directly at kitware@kitware.com.