How Kitware Explored Google Glass

Several months ago, Kitware signed up to participate in the Google Glass explorer program. The program is an arrangement set up by Google to make early prototypes of the Google Glass device available to adventurous testers who are willing to explore its capabilities. In this article, we summarize our experience in becoming familiar with Glass, bringing it to university classes, and performing basic programming tasks.

The first thing we learned about Glass is that it must be linked to a Gmail account. Through this link, the device gains access to many Google services, including G+, as well as photo and video uploading and sharing capabilities. We created a “Kitware Medical Glass” account to keep track of our experiments with the device. You can follow the G+ “Kitware Medical Glass” account at https://plus.google.com/104343368816417715059/posts.

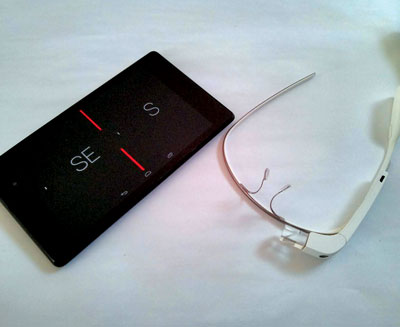

After linking Glass to this account, we set up the device so that it could connect to the wireless network. To this end, we entered the network SSID and password on the Google Glass web page, which generated a QR code that we then scanned with the Glass camera. It is also possible to pair Glass with a mobile Android device, such as a Nexus 4 phone or a Nexus 7 tablet, via a Bluetooth connection. One of the more useful features of this pairing is the ability to screencast to the mobile device (in our case, a Nexus 7 tablet) the image that the Glass user sees. This comes in handy when debugging programs and when demonstrating Glass features to a group of people.

Glass paired via Bluetooth to a Nexus 7 tablet, screencasting.

In order to start developing applications for Glass, we installed the Glass Development Kit on Ubuntu Linux 12.10: https://developers.google.com/glass/develop/gdk/quick-start

This, in turn, requires the Android SDK:

http://developer.android.com/sdk/installing/bundle.html

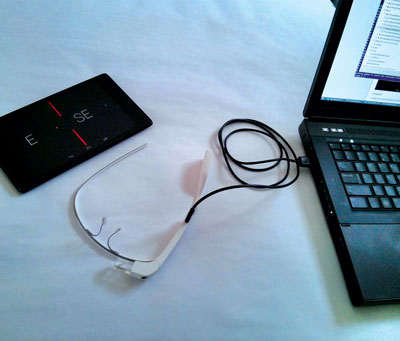

With the Android SDK, it is possible to write Glass applications on a laptop, using the Eclipse IDE, and to upload the generated bytecode to the device via a direct USB cable connection.

Glass connected via USB cable to a laptop with GDK for uploading generated bytecode.

Glass applications are written in much the same way as Android applications. The main difference is that special guidelines are provided for Glass UI design, given the device's particular display resolution and lighting conditions.

We started our programming exploration by modifying some of the examples that are available on GitHub (https://github.com/googleglass), including the compass sample application (https://github.com/googleglass/gdk-compass-sample). First, we built it in its original form, uploaded it to Glass, and verified its operation. Then, we ventured to modify the code and posted the intermediate changes in a GitHub repository located at https://github.com/luisibanez/gdk-compass-sample.

The programming process was quite intuitive, and it quickly became clear that the next steps in our exploration would involve reading sensor data, including compass and accelerometer values, and using the 2D and 3D graphics libraries that are compatible with the Glass device. Such libraries include the min3D library, which is located at https://code.google.com/p/min3d/.
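To give a feel for what combining compass and accelerometer readings involves, here is a minimal sketch in Python of the usual vector math for deriving a heading from a gravity vector and a magnetic field vector. It is not taken from the compass sample or from our modified repository. The function names (`cross`, `normalize`, `heading_degrees`) and the sample readings are hypothetical and chosen only for illustration. The sketch assumes Android-style device axes (x to the right, y toward the top of the device, z out of the screen) and an accelerometer at rest. On the device itself, this kind of computation is normally delegated to Android's `SensorManager.getRotationMatrix` and `SensorManager.getOrientation`.

```python
import math


def cross(a, b):
    """Cross product of two 3-component vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def normalize(v):
    """Scale a vector to unit length; reject a zero-length vector."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(c / length for c in v)


def heading_degrees(gravity, magnetic):
    """Heading of the device y axis, in degrees clockwise from magnetic north.

    gravity:  accelerometer reading at rest, in device coordinates
    magnetic: magnetometer reading, in device coordinates
    Raises ValueError if the two vectors are parallel, since the
    horizontal plane cannot be recovered in that case.
    """
    # East is perpendicular to both the magnetic field and "up".
    east = normalize(cross(magnetic, gravity))
    up = normalize(gravity)
    # North completes the right-handed east/north/up frame.
    north = cross(up, east)
    # Project the device y axis onto east and north to get the azimuth.
    azimuth = math.atan2(east[1], north[1])
    return math.degrees(azimuth) % 360.0


if __name__ == "__main__":
    # Hypothetical readings: device lying flat, y axis toward magnetic north,
    # field pointing north and downward as in the northern hemisphere.
    print(heading_degrees((0.0, 0.0, 9.81), (0.0, 20.0, -40.0)))
    # Same device turned so that its y axis points east.
    print(heading_degrees((0.0, 0.0, 9.81), (-20.0, 0.0, -40.0)))
```

With these made-up readings, the first call should report a heading of about 0 degrees and the second about 90 degrees. Real sensor values are noisy, so an application would typically smooth the readings before computing a heading.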

We shared the Glass experience at various academic events at the State University of New York at Albany and engaged students and faculty in conversations on the social impact of this emerging technology.

We are continuing to explore the use of the device in experimental medical applications, such as interfacing it with electronic medical records systems, which others have also investigated with great promise.

Luis Ibáñez is a Technical Leader at Kitware, Inc. He is one of the main developers of the Insight Toolkit (ITK). Luis is a strong supporter of Open Access publishing and the verification of reproducibility in scientific publications.