ParaView Catalyst Computes Particle Paths In Situ

Rotorcraft, such as helicopters, exhibit cyclic behavior as they fly in their own wakes. This behavior makes it difficult for analysts to evaluate results from computational fluid dynamics (CFD) simulations. Other factors that complicate evaluation include unsteady, vortex-dominated flow; reverse flow; compressibility; yawed flow; and flow separation. The complex flow fields of rotorcraft simulations also make it hard for analysts to set accurate initial conditions. In practice, several rotor blade revolutions must occur before the undesired transients caused by poor initial conditions die out. Because of all these complicating factors, the simulations often take a long time to run.

Particle paths, or path lines, track particles in fluid flows. The paths provide critical information to help analysts understand CFD simulation results. In particular, particle paths allow analysts to better comprehend unsteady flow. Analysts have used particle paths to assess model configurations and flight maneuvers for the HELIcopter Overset Simulations (HELIOS) code. This code stemmed from the Computational Research and Engineering Acquisition Tools and Environments – Air Vehicles (CREATE™-AV) effort of the Department of Defense High Performance Computing Modernization Program (HPCMP).

Although particle paths have offered analysts important insights, computing these paths post hoc has historically required significant resources. To obtain adequate fidelity in the results, analysts must save large numbers of files. Since file input and output have often been the slowest parts of simulation runs, analysts have rarely saved simulation results for every time step, even on moderately sized problems.

To make matters worse, post hoc computation also requires analysts to post-process results, which means loading files back in from disk. Loading and saving data is problematic on workstations, and it is even more problematic on high-performance computing (HPC) machines, where the disparity between compute and input/output (I/O) performance is greater.

To reduce file I/O, analysts have turned to in situ computation. In situ computation occurs while the simulation runs, so it uses computer resources efficiently. With in situ computation, analysts can access visualization and analysis results from every time step, and they can produce the information they need, potentially without any additional post-processing. (Bauer et al. [1] discuss in situ computation.)

To compute particle paths in situ, analysts can employ ParaView Catalyst [2]. ParaView Catalyst uses ParaView as a library and lets analysts choose which ParaView functionality to embed directly in parallel simulation codes such as HELIOS. It thus brings ParaView's production-quality capabilities into the simulation itself while saving significant computational resources.

ParaView Catalyst did not always contain all of the necessary functionalities to compute particle paths in situ, so the development team worked to add them. This article highlights these functionalities.

In Situ Computation With ParaView Catalyst

To enable ParaView Catalyst to compute particle paths in situ, the development team needed to add four key functionalities to ParaView: specifying the initial time step for particle path computation, clearing the particle history, caching the previous time step, and supporting simulation restarts.

The team did not, however, want to complicate particle path computation in the ParaView graphical user interface (GUI). Accordingly, the team added a separate class to ParaView, vtkInSituPParticlePathFilter. The following sections detail how this class computes particle paths in situ.

Specifying the Initial Time Step for Particle Path Computation

As mentioned earlier, in rotorcraft simulations such as those run with HELIOS, poor initial conditions can take a non-trivial amount of time to attenuate. To avoid computing useless and possibly confusing results, analysts need a particle path filter that injects particles into the flow only after a certain number of time steps. For post hoc analysis, this simply means that the filter processes only the files containing the time steps of interest. For in situ analysis, however, the filter itself must wait until a given time step before injecting particles and advecting them. The SetFirstTimeStep() method in the vtkInSituPParticlePathFilter class specifies this time step.
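
The gating that SetFirstTimeStep() provides can be sketched as a small schedule. This is a minimal illustration under stated assumptions: the struct InjectionSchedule and its members are hypothetical and are not the VTK API, and the periodic injection interval is an added assumption. With firstTimeStep = 720 and injectionInterval = 720, it reproduces the injection times used in the HART-II results.

```cpp
#include <cassert>

// Hypothetical schedule illustrating the idea behind SetFirstTimeStep():
// nothing is injected or advected before firstTimeStep, so start-up
// transients from poor initial conditions never enter the particle paths.
struct InjectionSchedule {
  int firstTimeStep;      // first step at which particles are injected
  int injectionInterval;  // assumed: re-inject a batch every N steps

  // True if a new batch of seed particles should be injected at `step`.
  bool ShouldInject(int step) const {
    assert(injectionInterval > 0);
    return step >= firstTimeStep &&
           (step - firstTimeStep) % injectionInterval == 0;
  }

  // True if existing particles should be advected at `step`; advection
  // begins on the step after the first injection.
  bool ShouldAdvect(int step) const { return step > firstTimeStep; }
};
```

For example, `InjectionSchedule{720, 720}` injects at steps 720, 1,440, 2,160, and so on, and skips every step before 720.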

Clearing the Particle History

When particle paths are post-processed in ParaView, the cost of file I/O dwarfs the cost of the actual computation. Accordingly, the particle path filter outputs the full time-dependent history of each particle for subsequent analysis and visualization. When particle paths are computed in situ, file I/O is not an issue, since all of the information needed to compute the paths already resides in memory.

With in situ computation, the difficulty instead stems from high temporal fidelity: in situ analysis considers every time step, while post hoc analysis typically considers only every tenth time step or fewer. Storing the full history of each particle path can quickly become memory-intensive, especially for long-running simulations with many injected particles. Accordingly, the SetClearCache() method in the vtkInSituPParticlePathFilter class configures the filter to keep only the current particle locations in memory.
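
The memory trade-off behind SetClearCache() can be seen in a small sketch. ParticleStore is hypothetical and does not describe how vtkInSituPParticlePathFilter stores data internally; it only contrasts keeping every position, where memory grows with the number of time steps, against keeping the latest position, where memory per particle stays constant.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

using Point = std::array<double, 3>;

// Hypothetical particle store contrasting full history with "clear cache".
class ParticleStore {
 public:
  explicit ParticleStore(bool clearCache) : clearCache_(clearCache) {}

  // Adds a newly injected particle at position p.
  void Inject(const Point& p) { history_.push_back(std::vector<Point>{p}); }

  // Records the new position of particle i after one time step.
  void Update(std::size_t i, const Point& p) {
    assert(i < history_.size());
    if (clearCache_) {
      history_[i].back() = p;    // overwrite: constant memory per particle
    } else {
      history_[i].push_back(p);  // append: memory grows every time step
    }
  }

  // Total number of positions currently held in memory.
  std::size_t StoredPoints() const {
    std::size_t n = 0;
    for (const auto& h : history_) n += h.size();
    return n;
  }

  // Latest known position of particle i.
  const Point& Current(std::size_t i) const { return history_[i].back(); }

 private:
  bool clearCache_;
  std::vector<std::vector<Point>> history_;
};
```

After one particle is injected and updated over 10 time steps, the full-history store holds 11 positions while the clear-cache store holds 1, and both report the same current location.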

Caching the Previous Time Step

When ParaView post-processes results, the particle path filter and the pipeline mechanics control the iteration through time steps. When ParaView Catalyst processes results in situ, however, the particle path filter normally has access only to the current time step. To compute the time advance of each particle, the filter must therefore cache the previous time step. The vtkInSituPParticlePathFilter class stores this cache automatically.
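
To illustrate why the previous time step must be cached, the sketch below advects particles with Heun's method, an explicit second-order scheme that needs the velocity field at both the previous and the current time. CachedAdvector and its Step() method are hypothetical, velocity fields are modeled as plain functions rather than VTK datasets, and the integration scheme is an assumption, not necessarily the one vtkInSituPParticlePathFilter uses.

```cpp
#include <array>
#include <cassert>
#include <functional>
#include <optional>
#include <vector>

using Point = std::array<double, 3>;
// A velocity field snapshot: maps a position to a velocity (hypothetical).
using VelocityField = std::function<Point(const Point&)>;

// Hypothetical advector. The simulation supplies only the current field at
// each step, so the previous field and its time are cached between calls.
class CachedAdvector {
 public:
  void Step(const VelocityField& current, double time,
            std::vector<Point>& particles) {
    if (previous_) {
      const double dt = time - previousTime_;
      assert(dt > 0.0);  // simulation time must advance
      for (Point& x : particles) {
        // Heun's method: predict with the cached previous field...
        const Point k1 = (*previous_)(x);
        Point predicted;
        for (int d = 0; d < 3; ++d) predicted[d] = x[d] + dt * k1[d];
        // ...then correct with the current field at the predicted point.
        const Point k2 = current(predicted);
        for (int d = 0; d < 3; ++d) x[d] += 0.5 * dt * (k1[d] + k2[d]);
      }
    }
    // Cache this step's field for the next call. A real in situ filter
    // would deep-copy the grid, since the solver may overwrite its arrays.
    previous_ = current;
    previousTime_ = time;
  }

 private:
  std::optional<VelocityField> previous_;
  double previousTime_ = 0.0;
};
```

On the first call there is no cached field, so particles do not move; from the second call on, each update blends the previous and current velocities.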

Restarting the Simulations

HELIOS simulations typically run for several days or longer. To work within the time limits of HPC queues, analysts periodically restart their simulations from previously computed time steps. For the in situ particle path results to be useful, the vtkInSituPParticlePathFilter class must produce the same results whether or not the analysts restarted the simulations.

To restart, a simulation must save information about its state. Similarly, ParaView Catalyst must save information about the state of vtkInSituPParticlePathFilter. When a simulation restarts, the AddRestartConnection() method in the vtkInSituPParticlePathFilter class uses a reader to load the previously saved particle state. The class then continues computing particle paths with no noticeable difference in output.
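
The restart requirement amounts to a round trip: the particle state saved at the end of one run stage must be restored exactly at the start of the next. The sketch below shows that idea with a plain-text format. ParticleState, Save(), Load(), and RoundTrip() are hypothetical stand-ins for the writer and reader that ParaView Catalyst would actually use, whose file formats are not described here.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <sstream>
#include <vector>

using Point = std::array<double, 3>;

// Hypothetical minimal particle state that must survive a restart.
struct ParticleState {
  int timeStep = 0;              // last completed simulation step
  std::vector<int> ids;          // stable particle identifiers
  std::vector<Point> positions;  // current particle locations
};

// Writes the state as text; 17 significant digits round-trip any double.
void Save(const ParticleState& s, std::ostream& out) {
  assert(s.ids.size() == s.positions.size());
  out.precision(17);
  out << s.timeStep << ' ' << s.ids.size() << '\n';
  for (std::size_t i = 0; i < s.ids.size(); ++i) {
    const Point& p = s.positions[i];
    out << s.ids[i] << ' ' << p[0] << ' ' << p[1] << ' ' << p[2] << '\n';
  }
}

// Restores a state written by Save().
ParticleState Load(std::istream& in) {
  ParticleState s;
  std::size_t n = 0;
  in >> s.timeStep >> n;
  s.ids.resize(n);
  s.positions.resize(n);
  for (std::size_t i = 0; i < n; ++i) {
    Point& p = s.positions[i];
    in >> s.ids[i] >> p[0] >> p[1] >> p[2];
  }
  return s;
}

// Simulates a restart: save at the end of one run stage, load at the next.
ParticleState RoundTrip(const ParticleState& s) {
  std::stringstream file;
  Save(s, file);
  return Load(file);
}
```

Because every position is restored bit-for-bit, advection after the restart continues from exactly the same state as an uninterrupted run would.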

Results

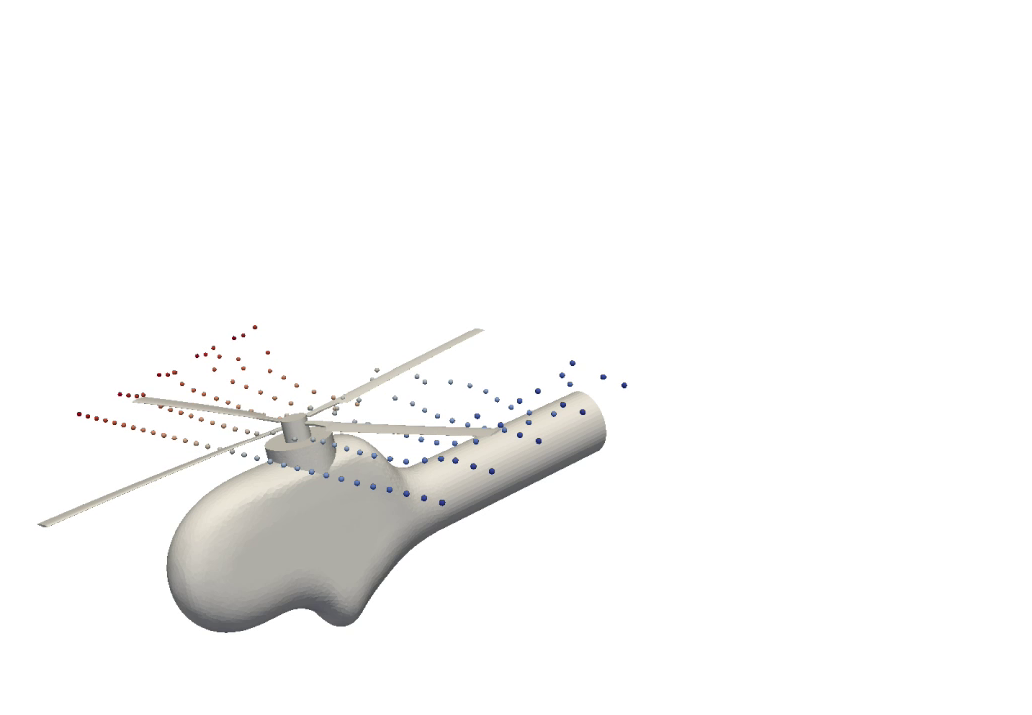

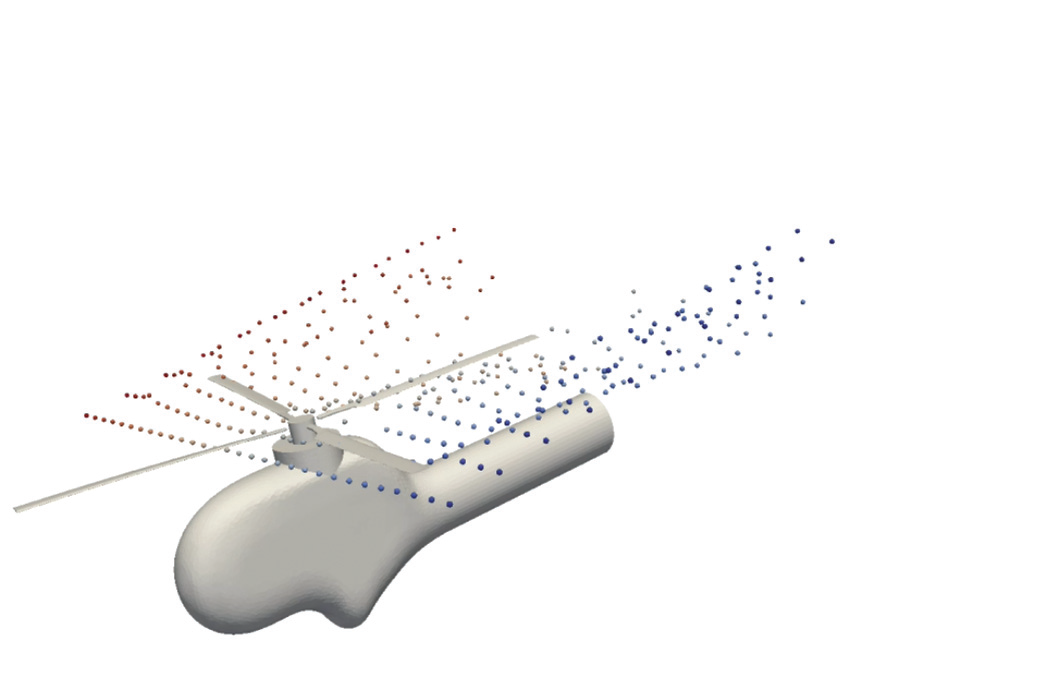

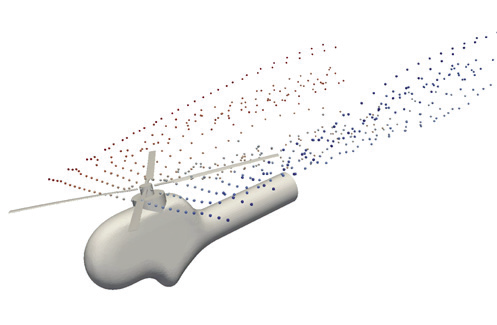

HELIOS was run on an experimental configuration called Higher-harmonic control Aeroacoustics Rotor Test II (HART-II). Many modelers and code developers find this configuration interesting because it has a good validation database. The simulation comprised a total of 14,400 time steps on 576 Message Passing Interface (MPI) processes on a Cray XC30 supercomputer and was divided into two separate run stages of 7,200 time steps each. Both run stages required roughly 38 hours of wall-clock run time.

In situ computations began at time step 720 and included analyses of the particle paths and the rotorcraft geometry. The vtkInSituPParticlePathFilter class injected 30 particles at this time step and at every 720th time step thereafter, and it updated the paths of all injected particles at every time step.

A separate set of in situ operations wrote out the particle path locations and the rotorcraft geometry every 72 time steps. Through ParaView Catalyst, the simulation therefore output results at 191 time steps. The simulation also wrote out the full grid twice (at time steps 0 and 4,320).
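
The count of 191 output time steps is consistent with writing every 72 steps from the first in situ step, 720, through the final step, 14,400: (14,400 - 720) / 72 + 1 = 190 + 1 = 191. The sketch below assumes that both end points are written; the function name OutputSteps is hypothetical.

```cpp
#include <cassert>

// Counts output steps when results are written every `interval` steps,
// starting at step `first` and ending at step `last` (both inclusive).
int OutputSteps(int first, int last, int interval) {
  assert(interval > 0 && last >= first);
  return (last - first) / interval + 1;
}
```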

The total in situ compute time amounted to 54 minutes during the first run stage and 66 minutes during the second. In terms of output size, the particle data came to 510 MB (an average of 2.7 MB per output time step), the rotorcraft geometry came to 7.4 GB (an average of 39 MB per output time step), and the full data came to 24 GB (an average of 12 GB per output time step). Although these are not large simulations by HELIOS standards, they clearly demonstrate the power of in situ computation to reduce the amount of data that analysts must write to disk.

ParaView 5.1.2 contains an example problem for computing particle paths in situ, located in the “Examples/Catalyst/CxxParticlePathExample” source sub-directory. To watch a video on in situ computation with ParaView Catalyst, visit https://vimeo.com/182893083.

Approved for public release; distribution unlimited.

Review completed by the AMRDEC Public Affairs Office (PR2502, 23 Sept 2016)

References

[1] Bauer, Andrew, Hasan Abbasi, James Ahrens, Hank Childs, Berk Geveci, Scott Klasky, Kenneth Moreland, Patrick O’Leary, Venkat Vishwanath, Brad Whitlock, and E. Wes Bethel. “In Situ Methods, Infrastructures, and Applications on High Performance Computing Platforms.” Computer Graphics Forum 35 (2016): 577–597.

[2] Kitware, Inc. “ParaView Catalyst for In Situ Analysis.” http://www.paraview.org/in-situ.

Andrew Bauer is a staff research and development engineer on the scientific computing team at Kitware. He primarily works on enabling tools and technologies for HPC simulations.

Andrew Wissink is a researcher in the rotorcraft computational aeromechanics group at the U.S. Army Aviation Development Directorate of Aviation and Missile Research, Development, and Engineering Center (AMRDEC). He is also the lead developer of HELIOS.

Mark Potsdam is a researcher in the rotorcraft computational aeromechanics group at the U.S. Army Aviation Development Directorate of AMRDEC. He specializes in complex, multidisciplinary rotorcraft simulations that use HPC.

Buvana Jayaraman is a part of the HELIOS development team. She is responsible for the development of the HELIOS GUI and for the validation of HELIOS.