Why should we spend time writing tests?

The new ITK release, 4.11.0, is planned for December 15th. There are still a few weeks left to work on new features and to improve the existing ones; that is, to contribute to one of the greatest and most challenging efforts the open-source community has seen in a long time. Developing new features is essential for ITK to progress with each release and to push the current boundaries of scientific computing in image processing.

An outstanding aspect of the open-source toolkits that Kitware maintains on behalf of a wide and active community of developers is the quality assurance mechanisms they employ. The Insight Segmentation & Registration Toolkit (ITK) and the Visualization Toolkit (VTK) are two heavily tested code libraries. They are heavily tested because the large amount of code they host requires systematic assurance that what worked a couple of weeks ago still works as expected after new contributions from the community.

So why should we spend time writing tests? The answer is simple: because it helps make research reproducible. A nice review of how ITK enables this can be found in an article published on Feb 20, 2014 in the Frontiers in Neuroinformatics journal.

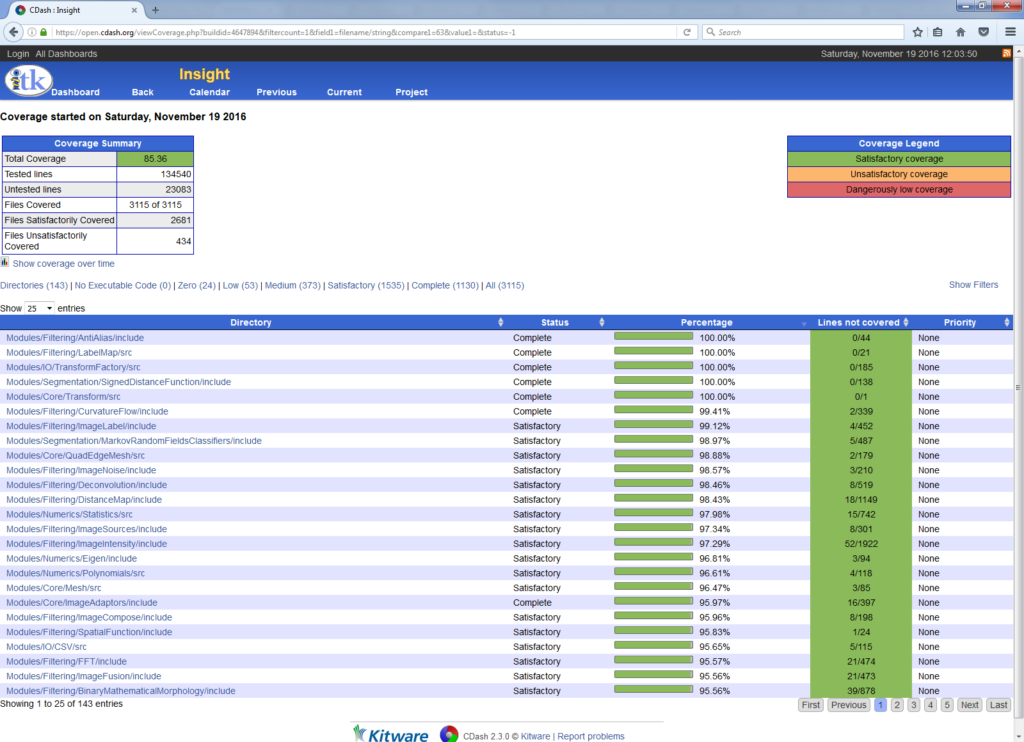

ITK and VTK’s development standards prompt developers to test the features they contribute. Both toolkits have set a very high standard for testing their code since their inception. Writing tests helps developers gain insight into their code and find more adequate and efficient ways to expose its interface. Maintaining a simple interface is always useful, and ensuring that no line of code is useless, i.e. that every line gets called for a specific purpose at some point, is a must. Testing also helps identify bugs that might otherwise go unnoticed. The degree to which a toolkit’s features are tested is measured by its code coverage, i.e. the percentage of lines of code that are effectively called by the tests.
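To make the idea of line coverage concrete, here is a minimal, self-contained C++ sketch. It assumes a hypothetical helper, `LineCoveragePercent`, and a hypothetical test function, `LineCoveragePercentTest`; neither is part of ITK or VTK. The test only borrows the general shape of an ITK test (a function taking `int, char *[]` and returning `EXIT_SUCCESS` or `EXIT_FAILURE`) and does not use ITK's actual testing macros.

```cpp
#include <cmath>
#include <cstdlib>
#include <iostream>

// Hypothetical helper (not part of ITK): percentage of instrumented lines
// that were executed at least once.
double LineCoveragePercent(long coveredLines, long totalLines)
{
  if (totalLines <= 0)
  {
    // A branch that is easy to leave untested: there is nothing to measure.
    return 0.0;
  }
  return 100.0 * static_cast<double>(coveredLines) / static_cast<double>(totalLines);
}

// Illustrative test function. The signature mimics the style of an ITK test,
// but the name and the checks are assumptions made for this sketch.
int LineCoveragePercentTest(int, char *[])
{
  bool passed = true;

  // Regular case: 3 of 4 lines executed.
  if (std::abs(LineCoveragePercent(3, 4) - 75.0) > 1e-9)
  {
    std::cerr << "Unexpected coverage for 3 of 4 lines" << std::endl;
    passed = false;
  }

  // Edge case: this call is what exercises the early-return branch.
  if (LineCoveragePercent(0, 0) != 0.0)
  {
    std::cerr << "Unexpected coverage for an empty file" << std::endl;
    passed = false;
  }

  return passed ? EXIT_SUCCESS : EXIT_FAILURE;
}
```

Without the second check, the early-return branch of `LineCoveragePercent` would never run during testing, and a coverage tool would report those lines as uncovered. Adding the edge case raises coverage and also documents what the function is expected to do when there is nothing to measure.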

I started contributing to improve ITK’s code coverage a while ago, and the experience has been both enriching and rewarding. The advocacy of code coverage and testing has changed the way I see the software development process and has made me appreciate its relevance. By the time I joined the community, an enormous effort had already been made in testing. Still, this is a continuous task, and both ITK’s and VTK’s code coverage figures still have room for improvement. A particularly simple but powerful thought applies to this effort: a simple test helps to ensure that the results a researcher a few thousand miles away gets are consistent and reproducible. Put another way, these contributions help to avoid errors in real-world applications, which can be critical at times.

A quote by Luis Ibáñez from around the time I started contributing has stayed with me. It read: “The safe assumption is: if it is not tested, it is broken.” Since that day, ITK’s code coverage has changed as follows:

- Coverage on Feb 14, 2014: 84.1%; coverage on Nov 16, 2016: 85.8%

- This corresponds to an increase in coverage of 6,647 lines of code

These figures were obtained using:

- The default ITK CMake configuration options

- GCC, LCov from Debian Jessie Linux

- The ITK/Utilities/Maintenance/computeCodeCoverageLocally.sh script

This should be enough to get anyone going, however tough or long the road may be. There is still a long way to go, and it will take a lot of effort, but making both ITK and VTK the flagships of reproducible and open scientific research is a worthwhile goal.