3D Tiles Generation using VTK

The Kitware Danesfield application provides an open source software solution designed to convert multi-view satellite imagery into 3D mesh models of buildings atop a separate terrain mesh. Recently we have extended Danesfield to operate on other sources of input such as point clouds from LIDAR or full motion video (FMV) generated by a drone.

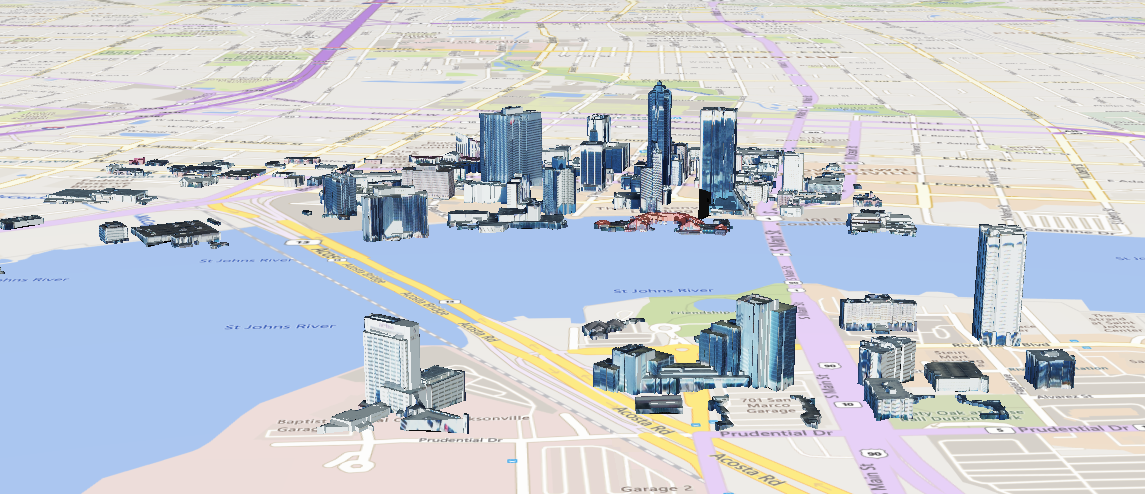

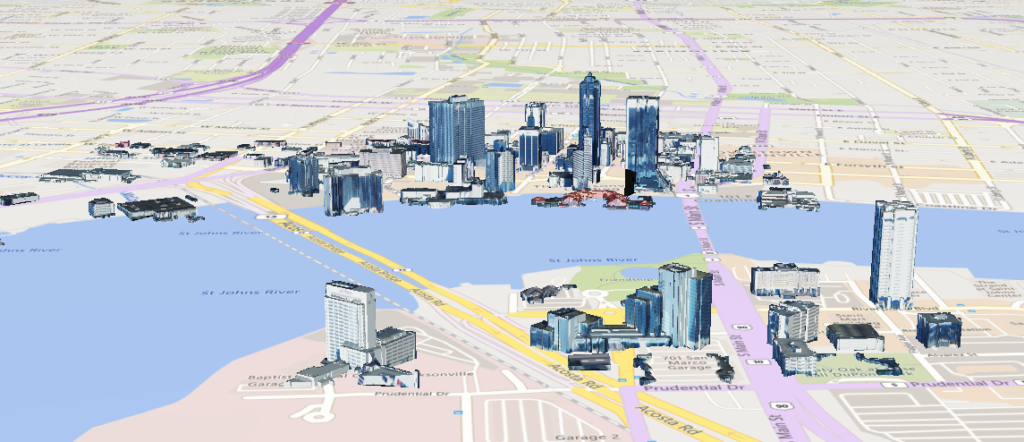

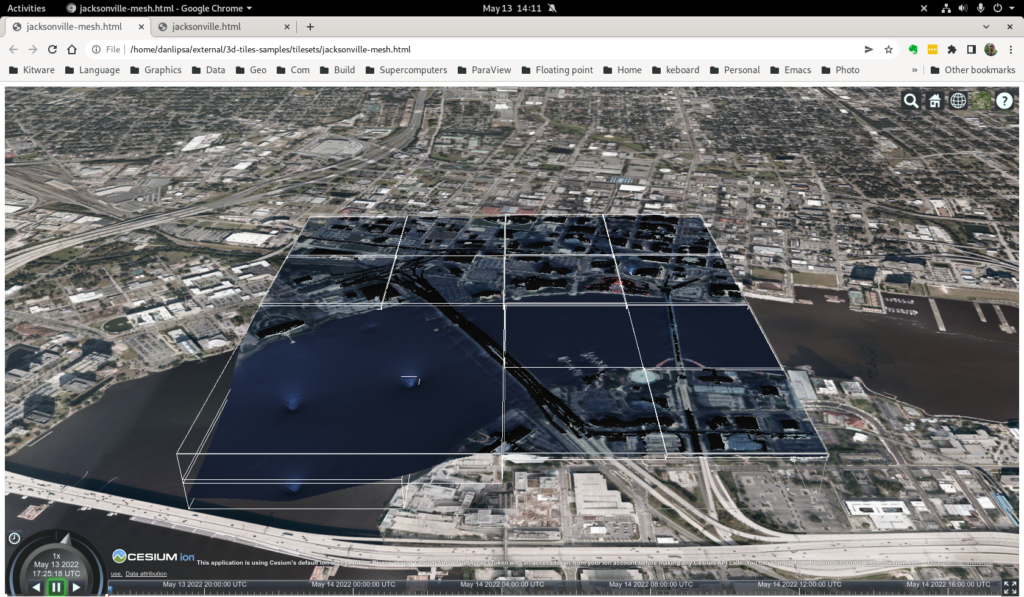

We have also updated Danesfield to package the 3D mesh models using the 3D Tiles standard so that they can be efficiently streamed and rendered in a web client. Notably, Cesium has released 3D Tiles as an open standard and the popular CesiumJS web client, which decodes and renders 3D Tiles, as open source software. However, only a few free and open source tools exist for encoding content into the 3D Tiles standard. This blog post describes additions to VTK and Danesfield that enable conversion of meshes or point clouds into the 3D Tiles format using a fast, efficient, and fully open source pipeline.

The 3D Tiles standard is designed for streaming and rendering massive 3D geospatial content in a client, typically a web browser. It supports streaming textured terrains and surfaces, 3D building interiors and exteriors, and point clouds. Our encoder supports all of these input data types. It does not support the additional data types described by the standard, such as 3D model instances (trees, vegetation, lamp posts) and composite content.

Results

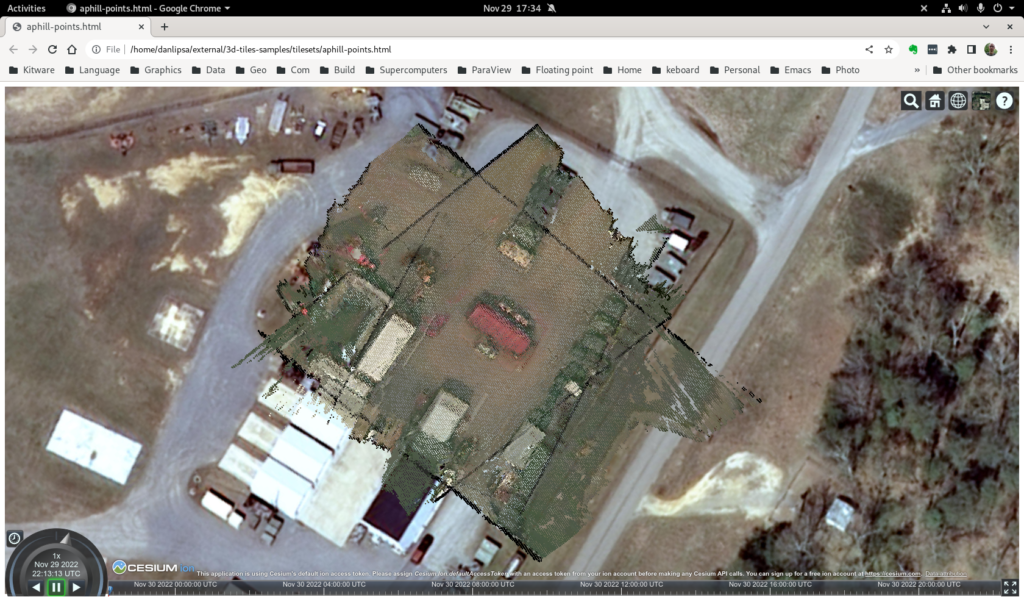

We developed a Python conversion utility that uses VTK to read input data and convert it to 3D Tiles. The input can be a textured terrain or surface (see Figure 2), a list of textured buildings (see Figure 3), or a point cloud (see Figure 4). We also allow users to associate property textures with a mesh, for instance, to encode the uncertainty in computing the point cloud or the mesh (see Figure 5).

Contributions

This work includes the following contributions to the Danesfield and VTK projects.

The Danesfield project includes a Python script, tiler.py, that drives the 3D Tiles encoding process. It reads the input files (we support OBJ, CityGML, LAS, LAZ, and VTP, and can easily add any format supported by VTK), quantizes any texture properties and stores them in the channels of RGB textures, and calls vtkCesium3DTilesWriter to do the conversion. The script tiler-test.sh shows how to use the various options available.
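To illustrate what quantizing a property into a texture channel can look like, here is a minimal sketch. It assumes linear 8-bit quantization over a known value range; the actual scheme used by tiler.py may differ, and the function names and sample values are hypothetical.

```python
def quantize(values, vmin, vmax, levels=256):
    """Map floats in [vmin, vmax] linearly to integer codes in [0, levels - 1]."""
    scale = (levels - 1) / (vmax - vmin)
    return [min(levels - 1, max(0, round((v - vmin) * scale))) for v in values]


def dequantize(codes, vmin, vmax, levels=256):
    """Recover approximate floats from integer codes."""
    step = (vmax - vmin) / (levels - 1)
    return [vmin + c * step for c in codes]


# Three hypothetical per-texel properties, each stored in one channel.
prop_r = [0.0, 0.3, 1.1, 2.0]
prop_g = [2.0, 1.7, 0.4, 0.0]
prop_b = [1.0, 1.0, 0.2, 0.9]
vmin, vmax = 0.0, 2.0  # assumed shared value range

channels = [quantize(p, vmin, vmax) for p in (prop_r, prop_g, prop_b)]
rgb_texels = list(zip(*channels))  # one (R, G, B) tuple per texel

restored_r = dequantize(channels[0], vmin, vmax)
step = (vmax - vmin) / 255
```

With this scheme the round-trip error of each value is bounded by half a quantization step, so the value range has to be stored alongside the texture for a client to decode it.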

The VTK library includes vtkCesium3DTilesWriter, which is used to save a mesh, list of buildings, or point cloud in 3D Tiles format. It supports EPSG codes to specify the input dataset’s Spatial Reference System (SRS). It also enables splitting the input mesh and texture into tile meshes and textures (for large mesh inputs), merging meshes and textures in a tile to improve performance (for buildings input), and multiple output formats (B3DM, PNTS, GLTF, GLB).

To support this work, we also upgraded libproj used in VTK from version 4.9.3 to version 8.1.0 and developed vtkGLTFWriter.

Example

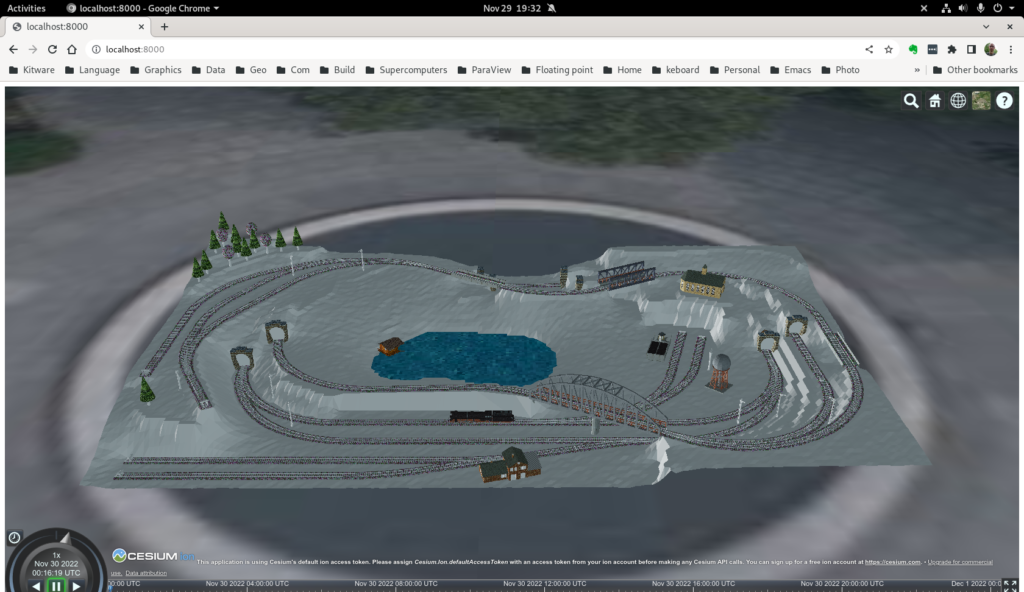

As a sample input, we use a public test dataset, called “Railway”. Railway is a 3D model demonstrating nearly all CityGML feature classes from the CityGML 2.0 specification. The dataset was created manually and represents an artificial scene. Coordinates are not georeferenced and the scene is downscaled to around 1:220.

To convert this data to 3D Tiles using Python and a build of VTK, we execute:

PYTHONPATH=~/projects/danesfield:~/projects/VTK/build/lib/python3.8/site-packages/ python ~/projects/danesfield/tools/tiler.py ~/data/citygml/CityGML_2.0_Test_Dataset_2012-04-23/*.gml -o toycity --utm_zone 18 --utm_hemisphere N -t 2 --translation 486981.5 4421889 -10 --lod 3 --content_gltf

Note that we set PYTHONPATH so that Python can find both the Danesfield and the VTK Python modules. VTK needs to be built with Python bindings and with the GDAL and PDAL modules, and Python also needs access to GDAL and PDAL. Because the data is not georeferenced, we use command line parameters to place it at a location that provides an appropriate background, a fountain in a Philadelphia park (--utm_zone 18 --utm_hemisphere N --translation 486981.5 4421889 -10). We read data from CityGML LOD 3 (--lod 3), generate the tile content as GLB (--content_gltf), and specify that we want at most 2 buildings per tile (-t 2).

Alternatively, we can use the kitware/danesfield Docker image, in which case we don’t have to install Python or compile VTK on the test computer. After downloading the Docker image (docker pull kitware/danesfield), the command needed to convert the test data to 3D Tiles is:

docker run -it -v ~/data:/mnt -v `pwd`:/workdir kitware/danesfield 'source /opt/conda/etc/profile.d/conda.sh && conda activate tiler && PYTHONPATH=danesfield python danesfield/tools/tiler.py /mnt/citygml/CityGML_2.0_Test_Dataset_2012-04-23/*.gml -o /workdir/toycity --utm_zone 18 --utm_hemisphere N -t 2 --translation 486981.5 4421889 -10 --lod 3 --content_gltf'

In either case, the data is generated in the toycity subdirectory. To view it, copy index.html to the toycity subdirectory, start a web server there (cd toycity; python -m http.server), and then open http://localhost:8000/ in your browser (see Figure 6).

Implementation details

Our writer uses an octree to organize the data. The points stored in the octree represent different features depending on the input data type: 1) centers of buildings if the input is a list of buildings; 2) centers of faces if the input is a textured mesh; or 3) the actual points if the input is a point cloud. After organizing the data in this spatial data structure, we save the tree structure in a JSON file along with additional attributes such as the bounding volume and the geometric error. We save the content of each tile in a binary GLTF format. The tile’s content differs depending on the input type: buildings for a list of buildings, faces for a mesh, and points for a point cloud.
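As a rough illustration of organizing points in an octree, the sketch below recursively splits a cell into octants until each leaf holds at most a given number of points (similar in spirit to the -t option). It is not the VTK implementation; the Node class, build_octree, and the sample points are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Point = Tuple[float, float, float]


@dataclass
class Node:
    lo: Point  # minimum corner of the node's cell
    hi: Point  # maximum corner of the node's cell
    points: List[Point] = field(default_factory=list)  # filled only for leaves
    children: List["Node"] = field(default_factory=list)


def build_octree(points, lo, hi, max_per_leaf, depth=0, max_depth=16):
    """Split the cell at its midpoint until leaves hold at most max_per_leaf points."""
    node = Node(lo, hi)
    if len(points) <= max_per_leaf or depth >= max_depth:
        node.points = list(points)
        return node
    mid = tuple((a + b) / 2.0 for a, b in zip(lo, hi))
    buckets = {}
    for p in points:
        # One boolean per axis selects the octant that contains the point.
        key = tuple(p[i] >= mid[i] for i in range(3))
        buckets.setdefault(key, []).append(p)
    for key, bucket in buckets.items():  # empty octants are never created
        c_lo = tuple(mid[i] if key[i] else lo[i] for i in range(3))
        c_hi = tuple(hi[i] if key[i] else mid[i] for i in range(3))
        node.children.append(
            build_octree(bucket, c_lo, c_hi, max_per_leaf, depth + 1, max_depth))
    return node


def leaves(node):
    """Yield all leaf nodes of the tree."""
    if not node.children:
        yield node
    else:
        for child in node.children:
            yield from leaves(child)


# Hypothetical feature centers (for example, building centers) in a unit cube.
centers = [(0.1, 0.1, 0.1), (0.2, 0.2, 0.2), (0.9, 0.1, 0.1),
           (0.1, 0.9, 0.1), (0.9, 0.9, 0.9), (0.8, 0.8, 0.8)]
root = build_octree(centers, (0.0, 0.0, 0.0), (1.0, 1.0, 1.0), max_per_leaf=2)
leaf_sizes = sorted(len(leaf.points) for leaf in leaves(root))
```

Each leaf then corresponds to one tile, and the features whose centers fall in that leaf become the tile's content.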

We support different file types for saving a tile: B3DM for buildings and mesh input (using an external program to convert from GLTF to B3DM), PNTS for point cloud input, and GLTF or GLB for all input types. While B3DM and PNTS are the default file formats supported by the 3D Tiles specification, the GLTF and GLB file formats are enabled by the 3DTILES_content_gltf extension of 3D Tiles. The bounding volume of each tile tightly encloses its content and is propagated to all the nodes in the tree using a post-order traversal.
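The post-order propagation of bounding volumes can be sketched as follows. This is an illustration under stated assumptions, not the writer's code: tiles are plain dictionaries, boxes are axis-aligned (xmin, ymin, zmin, xmax, ymax, zmax) tuples, the file names are hypothetical, and the geometric error is a placeholder (the box diagonal for interior tiles, zero for leaves). The JSON layout follows the 3D Tiles tileset structure, where a "box" bounding volume is a center followed by three half-axis vectors.

```python
import json
import math


def union(a, b):
    """Union of two axis-aligned boxes (xmin, ymin, zmin, xmax, ymax, zmax)."""
    return (tuple(min(a[i], b[i]) for i in range(3))
            + tuple(max(a[i], b[i]) for i in range(3, 6)))


def propagate_bounds(tile):
    """Post-order traversal: compute children first, then merge into the parent."""
    box = tile.get("content_box")  # tight box around the tile's own content, if any
    for child in tile.get("children", []):
        child_box = propagate_bounds(child)
        box = child_box if box is None else union(box, child_box)
    tile["box"] = box  # assumes every leaf provides a content_box
    return box


def to_3dtiles_box(b):
    """Convert min/max corners to a 3D Tiles box: center plus three half-axes."""
    cx, cy, cz = ((b[i] + b[i + 3]) / 2.0 for i in range(3))
    hx, hy, hz = ((b[i + 3] - b[i]) / 2.0 for i in range(3))
    return [cx, cy, cz, hx, 0.0, 0.0, 0.0, hy, 0.0, 0.0, 0.0, hz]


def to_tile_json(tile):
    """Build the JSON dictionary for one tile and, recursively, its children."""
    b = tile["box"]
    children = tile.get("children", [])
    out = {
        "boundingVolume": {"box": to_3dtiles_box(b)},
        # Placeholder error: zero for leaves, box diagonal for interior tiles.
        "geometricError": math.dist(b[:3], b[3:]) if children else 0.0,
        "refine": "ADD",
    }
    if "uri" in tile:
        out["content"] = {"uri": tile["uri"]}
    if children:
        out["children"] = [to_tile_json(c) for c in children]
    return out


# Hypothetical tree: an empty root with two leaf tiles.
tree = {"children": [
    {"uri": "0.b3dm", "content_box": (0.0, 0.0, 0.0, 1.0, 1.0, 1.0)},
    {"uri": "1.b3dm", "content_box": (2.0, 0.0, 0.0, 3.0, 1.0, 2.0)},
]}
propagate_bounds(tree)
root_json = to_tile_json(tree)
tileset = {"asset": {"version": "1.0"},
           "geometricError": root_json["geometricError"],
           "root": root_json}
tileset_json = json.dumps(tileset, indent=2)
```

Processing children before their parent guarantees that every interior tile's volume encloses everything beneath it, which a 3D Tiles client relies on for culling.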

Because GLTF supports only 4-byte floating point values for vertex coordinates, we added the ability to store mesh vertices relative to an origin and to store the translation to that origin as 8-byte floating point values. This allows us to store geocentric (ECEF) coordinates in the GLTF files without losing precision, which is needed when storing 3D Tiles data.
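The effect of storing vertices relative to an origin can be checked with a small experiment. The sketch below uses only the standard library to round values to 32-bit floats; the coordinates are made-up ECEF-scale values in meters, and choosing the first vertex as the origin is an assumption for illustration.

```python
import struct


def to_float32(x):
    """Round a 64-bit Python float to the nearest 32-bit float."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def max_abs_error(approx, exact):
    """Largest per-coordinate absolute difference between two vertex lists."""
    return max(abs(a - e) for va, ve in zip(approx, exact) for a, e in zip(va, ve))


# Illustrative ECEF-scale coordinates in meters (not real survey data).
vertices = [
    (1253000.123, -4732000.456, 4073000.789),
    (1253010.623, -4731990.956, 4073005.289),
]
origin = vertices[0]  # kept as 64-bit values, like the stored translation

# Option 1: store the large coordinates directly as 32-bit floats.
direct = [tuple(to_float32(c) for c in v) for v in vertices]

# Option 2: store small offsets as 32-bit floats and add the 64-bit origin back.
offsets = [tuple(to_float32(c - o) for c, o in zip(v, origin)) for v in vertices]
restored = [tuple(r + o for r, o in zip(rv, origin)) for rv in offsets]

direct_error = max_abs_error(direct, vertices)
relative_error = max_abs_error(restored, vertices)
```

For magnitudes between 2^22 and 2^23 (about 4.2 to 8.4 million), adjacent 32-bit floats are 0.5 apart, so direct storage of ECEF coordinates in meters can be off by centimeters or more, while the offsets from a nearby origin keep the error far below a millimeter.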

Future work

For this work, we focused on the following:

- Supporting all data types used in Danesfield, whether as input, intermediate results, or output: point clouds, meshes, and buildings.

- Supporting property textures, which are used to represent error covariance data applied to meshes.

- Eliminating the need for external scripts, such as those that convert from GLTF to GLB or B3DM.

For future work, possible improvements include:

- Increasing the size of the datasets processed by using out-of-core processing.

- Increasing the speed of processing.

- Decreasing the transmission time and improving rendering performance in Cesium.

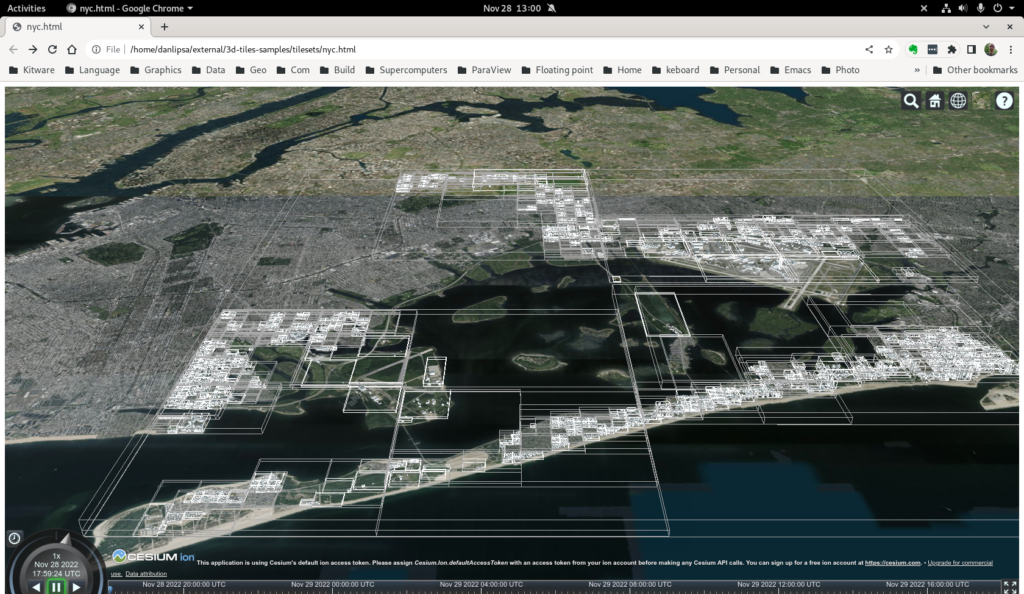

To process data out of core, we plan to take advantage of the VTK streaming capabilities, which allow us to read and convert to 3D Tiles only one chunk of data at a time and then create a top-level tileset that refers to all chunk tilesets. This enhancement will allow us to encode very large datasets, such as NYC, that we could not convert in their entirety with the current version of the software.
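A top-level tileset that refers to chunk tilesets could look like the sketch below, which relies on the 3D Tiles feature that a tile's content URI may point to another tileset JSON file (an external tileset). This is only an illustration of the planned approach, not existing Danesfield code: the chunk list, URIs, regions, and error values are hypothetical, and the region union ignores datasets that cross the antimeridian.

```python
import json


def union_region(a, b):
    """Union of two regions [west, south, east, north, min_height, max_height].

    Angles are in radians and heights in meters, as in 3D Tiles regions.
    """
    return [min(a[0], b[0]), min(a[1], b[1]),
            max(a[2], b[2]), max(a[3], b[3]),
            min(a[4], b[4]), max(a[5], b[5])]


def make_top_level(chunks):
    """Build a tileset whose root children point at per-chunk tilesets."""
    region = chunks[0]["region"]
    for chunk in chunks[1:]:
        region = union_region(region, chunk["region"])
    # Assumption: the parent error must be at least as large as any child's.
    error = max(chunk["geometricError"] for chunk in chunks)
    children = [{
        "boundingVolume": {"region": chunk["region"]},
        "geometricError": chunk["geometricError"],
        "content": {"uri": chunk["uri"]},  # external tileset for this chunk
    } for chunk in chunks]
    return {
        "asset": {"version": "1.0"},
        "geometricError": error,
        "root": {
            "boundingVolume": {"region": region},
            "geometricError": error,
            "refine": "ADD",
            "children": children,
        },
    }


# Hypothetical chunks, each already converted to its own tileset.
chunks = [
    {"uri": "chunk0/tileset.json", "geometricError": 40.0,
     "region": [-1.3120, 0.6980, -1.3110, 0.6990, 0.0, 120.0]},
    {"uri": "chunk1/tileset.json", "geometricError": 55.0,
     "region": [-1.3110, 0.6980, -1.3100, 0.6990, -5.0, 90.0]},
]
top_level = make_top_level(chunks)
top_level_json = json.dumps(top_level, indent=2)
```

Because each chunk is written independently, only one chunk needs to be in memory at a time, and the client loads a chunk's tileset only when its region comes into view.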

To increase the processing speed, we will identify areas that can be parallelized and use vtkSMP to take advantage of the multi-core architectures available on modern computers. To decrease the transmission time, we can use mesh or point cloud simplification, binary data compression that works at the GLTF bufferView level (EXT_meshopt_compression), mesh compression (KHR_draco_mesh_compression), and texture image compression (KHR_texture_basisu, using texture images saved as KTX v2 instead of PNG or JPG; mip-map levels are also possible with this scheme, which improves rendering speed as well).

To improve rendering performance and user experience, we can use multiple levels of detail (LOD) by using simplified meshes (for mesh input) or significant buildings or simplified meshes for buildings (for buildings input) on nodes higher in the tree.

Hello, do you have a working example, possibly in Python, with explanation of parameters needed by vtkCesium3DTilesWriter?

PS: the example is not clear, since it first imports new objects and then immediately exports them. What are the options if you have a collection of standard polydata (maybe with some of them textured)?

Thanks!

In this file we list a number of tests for some of the data we have:

https://github.com/Kitware/Danesfield/blob/master/tools/tiler-test.sh

tiler --help

would describe all options available. If you have further questions, please post them on VTK Discourse.

Thanks!

I have already opened a topic on Discourse:

https://discourse.vtk.org/t/vtkcesium3dtileswriter-explanation-of-parameters/11622

Hello, are you still active on that project? I would like to discuss with you how to build an end-to-end open source framework, from 3D production to a 3D Tiles server and end-user applications using 3D Tiles.

Here is some recent work on this: https://www.kitware.com/unlocking-geospatial-insights-integrating-3d-tiles-and-geotransforms-into-paraview-for-seamless-geospatial-data-processing/

VTK discourse would be a good place to discuss this.