- Webinar

- April 15, 2026

- 12-1pm ET

How to Stress-Test Your AI Models Without Collecting New Data

A practical walkthrough of installing, configuring, and applying NRTK to systematically evaluate model performance under controlled perturbations.

AI models rarely fail in controlled lab environments — they fail in the real world. But collecting real-world edge cases is expensive, slow, and often impractical.

In this hands-on webinar, we’ll show you how to use the open-source Natural Robustness Toolkit (NRTK) to evaluate model behavior using synthetic perturbation testing. Instead of gathering new field data, you’ll learn how to systematically apply controlled disturbances to existing datasets to simulate operational conditions.

We’ll walk through:

- Installing and validating the NRTK Python package.

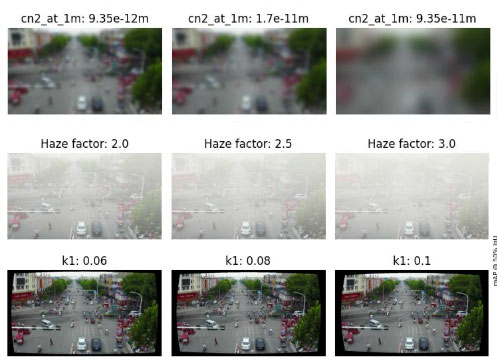

- Running perturbations on sample imagery.

- Configuring controlled parameter sweeps to stress-test model performance.
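To give a feel for what a controlled parameter sweep looks like before the session, here is a minimal, dependency-free sketch. It does not use NRTK's API: the functions `add_gaussian_noise` and `mean_abs_change`, the toy 8x8 image, and the chosen noise levels are all illustrative assumptions. The idea is simply to apply one perturbation at several strengths, with a fixed seed so every run produces the same perturbed images.

```python
import random


def add_gaussian_noise(image, sigma, seed=0):
    """Return a noisy copy of a grayscale image (rows of 0-255 ints).

    Hypothetical helper for illustration; not part of NRTK.
    A fixed seed makes the perturbation reproducible.
    """
    rng = random.Random(seed)
    return [
        # Add zero-mean Gaussian noise, then clamp to the valid pixel range.
        [min(255, max(0, round(px + rng.gauss(0.0, sigma)))) for px in row]
        for row in image
    ]


def mean_abs_change(original, perturbed):
    """Average absolute per-pixel difference between two images."""
    total = sum(
        abs(a - b)
        for row_o, row_p in zip(original, perturbed)
        for a, b in zip(row_o, row_p)
    )
    count = sum(len(row) for row in original)
    return total / count


# Toy 8x8 gradient standing in for a real sample image.
image = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]

# The sweep: one perturbation, several controlled strengths.
sigmas = [0.0, 5.0, 10.0, 20.0]
sweep = {sigma: add_gaussian_noise(image, sigma) for sigma in sigmas}

for sigma, perturbed in sweep.items():
    print(f"sigma={sigma:>5}: mean pixel change = {mean_abs_change(image, perturbed):.2f}")
```

In a real evaluation, each perturbed image would be passed to the model under test and scored with a task metric instead of a pixel difference.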

Whether you’re building computer vision systems or evaluating deployed models, this session will give you a reproducible workflow for testing robustness before real-world failures occur.

You’ll also hear from the NRTK development team about the recent v1.0 release and how the framework supports AI Test & Evaluation (T&E) efforts — with time reserved for live Q&A.

Key Takeaways

- Install and configure NRTK in your own Python environment.

- Apply operationally relevant perturbations to expand existing datasets.

- Design controlled parameter sweeps to measure performance degradation.

- Generate reproducible robustness evaluations without collecting new field data.

- Put your implementation questions directly to the NRTK development team.
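As a rough illustration of measuring performance degradation from a sweep, the sketch below summarizes results relative to the unperturbed baseline. The helper names `relative_drop` and `first_failure`, the 15% tolerance, and the scores themselves are placeholder assumptions for illustration only; they are not measurements and not NRTK functions.

```python
def relative_drop(baseline, score):
    """Fractional drop from the baseline score (0.0 means no change)."""
    if baseline <= 0:
        raise ValueError("baseline score must be positive")
    return (baseline - score) / baseline


def first_failure(results, tolerance):
    """Return the first parameter value whose drop exceeds the tolerance.

    `results` is a list of (parameter_value, score) pairs sorted by
    parameter value, with the unperturbed baseline first.
    Returns None if the model stays within tolerance across the sweep.
    """
    baseline = results[0][1]
    for value, score in results:
        if relative_drop(baseline, score) > tolerance:
            return value
    return None


# Placeholder sweep results: (perturbation strength, accuracy).
# These numbers are made up to show the calculation.
results = [(0, 0.90), (1, 0.88), (2, 0.81), (3, 0.63)]

for value, score in results:
    drop = relative_drop(results[0][1], score)
    print(f"strength={value}: score={score:.2f}, relative drop={drop:.1%}")

print("first strength beyond 15% tolerance:", first_failure(results, 0.15))
```

Reporting the drop relative to a baseline, instead of raw scores alone, makes sweeps comparable across models that start from different accuracy levels.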